"Deploy AI. Reduce customer support ticket costs by 30%."

You've heard it in a vendor call.

You've read it in a Gartner brief. You've seen it in a deck from a consultant. You've watched it surface in press releases, LinkedIn carousels, and webinars. It's been cited by IBM, repeated by analysts, and copy-pasted into every category report published in the last three years.

And somewhere along the way… it stopped sounding like a statistic. It became furniture. Background noise. Part of the landscape of AI promises that nobody seriously interrogates anymore.

That's the problem with the 30% figure right now. It has been repeated without context for so long, by so many people with so many different agendas, that it lives in a fog.

It's not a lie. But it's not clean truth either. And at this point, a lot of support leaders have silently reached the same conclusion: if you bolt an AI chatbot somewhere into your support stack, you should eventually see something close to a 30% reduction in ticket costs.

That's just how it works now, right?

That assumption is quietly costing a lot of teams real money.

Can AI really reduce ticket support costs by 30%?

Short answer: yes.

But only under specific conditions that the people citing the number almost never explain. And the conditions matter more than the headline.

The long answer:

Research shows that 35% of AI customer service projects never break even. Put differently, for roughly every two companies posting AI savings announcements, there's one quietly absorbing costs they're not talking about in public.

The 30% is real. But it's earned, not automatic. And the gap between the teams that get there and the ones that don't comes down to a handful of conditions that almost nobody explains when they cite the number.

So let's actually answer the question.

The 30% Claim: Where It Comes From

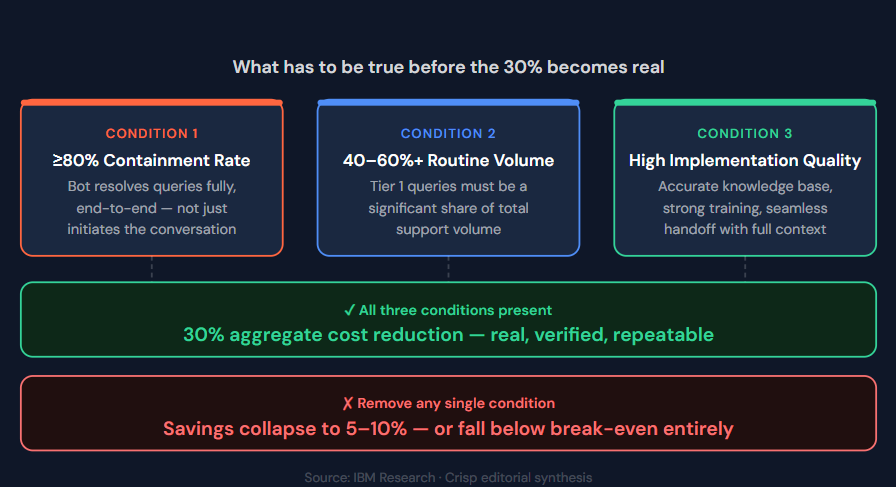

The figure has a real origin. IBM Research found that chatbots handling up to 80% of routine inquiries cut ticket support costs by approximately 30%. Credible source, credible finding. But it comes loaded with three conditions that nearly every deck quoting it quietly drops.

- The chatbot has to contain 80% of routine queries end-to-end, not just start conversations about them.

- Routine queries have to represent at least 40-60% of total support volume.

- And implementation quality has to be high enough that the support bot resolves conversations rather than merely delays them until a human steps in.

When all three are true simultaneously, 30% aggregate cost reduction is real and repeatable. When any one is missing, savings could collapse to 5-10%. Sometimes negative.

What the real numbers look like

The most reliable benchmark data doesn't come from vendor surveys. It comes from companies that publicly disclosed outcomes from real, large-scale deployments.

- According to Gartner, contact center AI is projected to save businesses $80 billion in labor costs by 2026.

- Accenture research found that companies treating customer service as a value center achieve 3.5x more revenue growth while spending less than 1% of revenue more on service.

- Forrester found customers are 2.4x more likely to remain loyal when their issues are resolved quickly.

But that's the macro picture.

Here's what specific deployments actually reported:

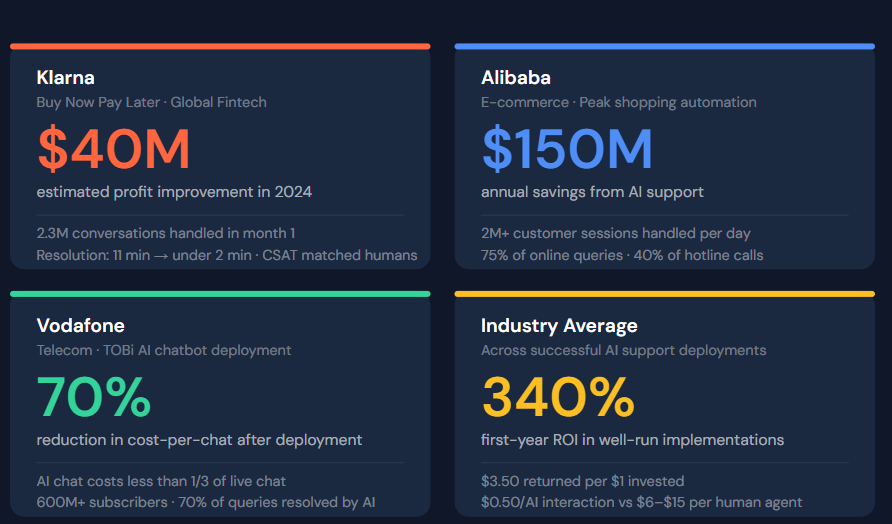

Klarna: In its first month of operation, Klarna's AI handled 2.3 million conversations — two-thirds of all customer service chats. Resolution time dropped from 11 minutes to under 2 minutes. Repeat inquiries fell 25%. Estimated profit improvement: $40 million in 2024. CSAT matched human agent performance.

Alibaba: During peak shopping periods, Alibaba's AI handles over 2 million customer sessions per day, addressing 75% of all online queries and 40% of hotline inquiries. Annual savings: approximately $150 million.

Vodafone: After deploying its AI chatbot (TOBi), Vodafone saw a 70% reduction in cost-per-chat. Serving customers via AI cost less than one-third of live chat with the support team.

Industry average: Companies report an average 340% first-year ROI, with $3.50 returned for every $1 invested. The per-interaction cost drops from roughly $6–15 (human agent) to $0.50 (AI chatbot).

How Submagic used Crisp for AI-powered support:

Due to its rapid growth, Submagic struggled with customer support:

- High volume: Over 3,500 conversations per month.

- Slow response times: An average response time of 1 hour and 26 minutes, which overwhelmed their support agents and annoyed customers.

By implementing the Crisp AI Support Bot, Submagic automated responses to common inquiries (like FAQs and product questions) and used smart routing to direct complex issues to human agents.

This led to:

- A 95% faster response time (from 1h26m to 5m33s).

- A 65% reduction in new conversations created, thanks to Crisp Overlay, the website search widget.

- Stabilized customer satisfaction (CSAT) around 4.3.

Below is a short explanation from Stefan Sekovski, customer support specialist at Submagic, of how they've been leveraging AI to improve customer support.

We've reduced the timing by being able to quickly identify the importance of the user's case. We have clear categorization & prioritization, we use automation and self-service with the help-desk articles our AI help library and with the AI bot answering tier1 questions on the help live-chat. Our agents are able to resolve any issues by having clear decision trees and case examples that they can review for assistance. We have internal com channels (WhatsApp/Discord) where we report/escalate issues and have them resolved super fast. We use also shortcuts to reduce the time between replying and increase the communication efficiency.

In summary, Crisp's AI helped the company to automate and scale its customer support, enabling it to grow efficiently to $8M in Annual Recurring Revenue (ARR).

The metric that quietly kills ROI: deflection vs containment

Deflection rate and containment rate are not the same number. Your vendor will probably present them like they are.

Deflection rate is the percentage of conversations the bot starts without the customer immediately requesting a human. It looks great on a dashboard. It is almost meaningless as an ROI metric on its own.

Containment rate is the percentage of conversations the bot resolves end-to-end — without agent involvement, and without the customer coming back with the same problem. This is the number that determines whether money is actually being saved.

Think of it like a hospital emergency room. Deflection is how many people you turn away at the door. Containment is how many of those people actually got treated, went home, and stayed home. The first number looks impressive in a board meeting. The second number is what matters to the budget.

A bot with a 70% deflection rate but a 35% containment rate means roughly half of those "deflected" conversations return as agent tickets — usually more frustrated, more complex, requiring more handle time than if they'd gone directly to a human. You've now paid for both the AI layer and the extra agent effort. That's the mechanism behind the 35% failure rate.
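The arithmetic behind that example can be made explicit. The sketch below assumes both rates are expressed as fractions of total conversations, which the example implies but does not state. `returned_share` is an illustrative name, not a metric any particular platform exposes.

```python
def returned_share(deflection_rate: float, containment_rate: float) -> float:
    """Share of deflected conversations that come back as agent tickets.

    Assumption: both rates are fractions of TOTAL conversations,
    so containment can never exceed deflection.
    """
    if not 0 < deflection_rate <= 1:
        raise ValueError("deflection_rate must be in (0, 1]")
    if not 0 <= containment_rate <= deflection_rate:
        raise ValueError("containment_rate must be between 0 and deflection_rate")
    # Deflected but not contained = conversations that bounce back to agents.
    return (deflection_rate - containment_rate) / deflection_rate


share = returned_share(0.70, 0.35)
print(f"{share:.0%} of deflected conversations return to agents")  # 50%
```

The closer this ratio gets to zero, the closer the dashboard deflection number is to real savings.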

The real ROI formula

The savings from AI customer service come from three distinct places. Most implementations only measure the first, which is why ROI projections tend to look better on paper than in the quarterly review.

1. Labor cost savings

- Agent hours saved by AI deflection × fully-loaded hourly cost

- This is the largest component and the easiest to measure

2. Productivity gains

- Agent efficiency improvement from AI-assist tools (fewer manual lookups, faster drafting, automated after-call work)

- Typically adds 15–30% to the labor savings figure

3. Revenue protection

- Faster response times reduce churn: customers who get instant answers are less likely to cancel or go elsewhere

- 24/7 availability captures inquiries that would previously have gone unanswered

Minus: Total implementation and operating cost

- Platform license or usage fees

- Implementation and training investment

- Ongoing maintenance and QA

- Internal time spent on knowledge base management

The formula:

ROI = [(Labor savings + Productivity gains + Revenue protection) − Total AI cost] ÷ Total AI cost × 100

For most teams deploying a Support AI chatbot on high-volume Tier 1 queries, the math turns positive within 3–6 months.
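The formula translates directly into a few lines of Python. This is a minimal sketch: the function name and the annual figures in the example are hypothetical placeholders, chosen only so that productivity gains land at 20% of labor savings (inside the 15–30% range mentioned above).

```python
def support_ai_roi(
    labor_savings: float,
    productivity_gains: float,
    revenue_protection: float,
    total_ai_cost: float,
) -> float:
    """Return ROI as a percentage, following the formula above."""
    if total_ai_cost <= 0:
        raise ValueError("total_ai_cost must be positive")
    total_benefit = labor_savings + productivity_gains + revenue_protection
    return (total_benefit - total_ai_cost) / total_ai_cost * 100


# Hypothetical annual inputs, for illustration only.
roi = support_ai_roi(
    labor_savings=60_000,
    productivity_gains=12_000,   # 20% of labor savings
    revenue_protection=8_000,
    total_ai_cost=24_000,        # license + implementation + maintenance + KB time
)
print(f"ROI: {roi:.0f}%")  # ROI: 233%
```

Keeping the three benefit terms as separate arguments makes it obvious when a projection rests on labor savings alone.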

What the same 100 tickets actually cost

Abstract percentages are easier to believe than they are to build a budget case with. Here's what the math looks like when you run the same 100 support queries through two operating models.

Read the left side carefully. Without AI, you spend $1,010 on 100 tickets. $520 of that goes toward 65 simple Tier 1 queries — password resets, order lookups, billing FAQs — that your highest-value agents shouldn't be touching. The remaining $490 covers 35 genuinely complex tickets that require judgment, empathy, or troubleshooting. The $520 is dead spend. It's the cost of using premium human talent to do mechanical work.

Now read the right side. With a 75% AI containment rate, the AI resolves 75 of the 100 tickets for $37.50 total ($0.50 each). The 25 cases escalated to agents still cost $350 ($14 each), because that's what real human support time costs for genuinely hard problems. Total: $387.50. Cost per ticket drops from $10.10 to $3.88.

That's a 61.6% cost reduction on this support ticket mix.

Scale it to monthly volume. At 5,000 tickets per month, the $6.22 savings per ticket becomes $31,100 per month — before productivity gains or revenue protection are counted. That's $373,200 in year-one labor savings alone. Against a $2,000/month platform cost, the ROI math is not difficult to defend in any budget meeting.
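To rerun this comparison with your own mix, a small helper is enough. The per-ticket costs below are not stated directly in the text; they are inferred from the totals ($520 / 65 = $8, $490 / 35 = $14, $37.50 / 75 = $0.50), and `blended_cost` is an illustrative name.

```python
def blended_cost(ticket_groups: list[tuple[int, float]]) -> tuple[float, float]:
    """Return (total cost, cost per ticket) for groups of (count, unit cost)."""
    total = sum(count * unit_cost for count, unit_cost in ticket_groups)
    tickets = sum(count for count, _ in ticket_groups)
    return total, total / tickets


# Without AI: 65 Tier 1 tickets at $8, 35 complex tickets at $14 (inferred unit costs).
without_ai_total, without_ai_per_ticket = blended_cost([(65, 8.00), (35, 14.00)])

# With AI: 75 tickets resolved by the bot at $0.50, 25 escalated at $14.
with_ai_total, with_ai_per_ticket = blended_cost([(75, 0.50), (25, 14.00)])

reduction = 1 - with_ai_total / without_ai_total
monthly_savings = (without_ai_per_ticket - with_ai_per_ticket) * 5_000

print(f"Without AI: ${without_ai_total:,.2f} (${without_ai_per_ticket:.2f}/ticket)")
print(f"With AI:    ${with_ai_total:,.2f} (${with_ai_per_ticket:.3f}/ticket)")
print(f"Reduction:  {reduction:.1%}")
print(f"Monthly savings at 5,000 tickets: ${monthly_savings:,.0f}")
```

With unrounded inputs the monthly figure comes out at $31,125; the $31,100 quoted above comes from multiplying the rounded $6.22 per-ticket saving by 5,000.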

Teams that automate that Tier 1 load also report meaningful improvements in agent satisfaction, which shows up in retention.

With support team turnover averaging 30-45% annually and replacement costs running 50-200% of annual salary, even a 10-point retention improvement on a mid-size team represents $30,000+ in real annual savings. That figure never appears in an AI ROI model, but it shows up clearly in your HR budget.
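The retention figure is simple enough to check by hand, but a sketch makes the inputs explicit. The team size (20) and replacement cost ($15,000 per head) are the values this article uses in its measurement section; treat them as placeholders for your own numbers. The function name is illustrative.

```python
def retention_savings(
    team_size: int,
    retention_gain_points: float,
    replacement_cost_per_head: float,
) -> float:
    """Annual savings from avoided departures.

    retention_gain_points is in percentage points (10 means turnover
    drops by 10 points, e.g. from 40% to 30%).
    """
    avoided_departures = team_size * retention_gain_points / 100
    return avoided_departures * replacement_cost_per_head


savings = retention_savings(team_size=20, retention_gain_points=10,
                            replacement_cost_per_head=15_000)
print(f"${savings:,.0f} per year")  # $30,000 per year
```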

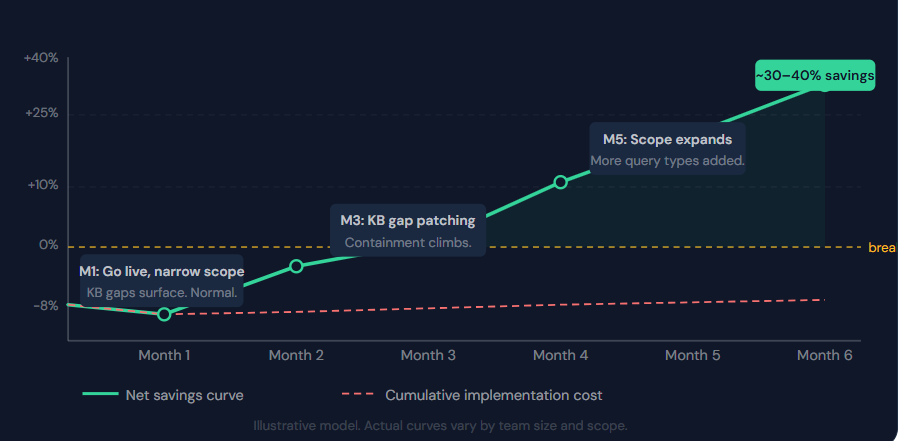

Timeline to ROI: what months 1–6 actually look like

The "3 to 6 month" payback period gets cited constantly. What that summary hides is that the curve is not a straight line. Most implementations look like a ramp with a bump in it — and the teams that misread the bump declare failure right before things start working.

Month 1: the bot goes live on a narrow scope, usually the top five to ten query types by volume. Deflection looks decent. Containment looks disappointing. The knowledge base has gaps nobody predicted. Net savings are close to zero. This is not a sign the project is failing. It's the expected shape of the curve.

Months 2-3: this is where the real work happens. Knowledge base gaps get patched. The AI has seen enough real conversation data to handle edge cases more reliably. Containment rate climbs. The queue starts to feel lighter. First genuine savings appear.

Months 4-5: scope expands. Additional query types roll in as the team gains confidence. Productivity tools layer in for agents handling escalated work. Savings accelerate.

Month 6: the platform has processed enough real data to improve continuously. Teams with tight measurement reach 25-40% aggregate cost reduction on the query types in scope. This is when the headline becomes achievable — not at month 1, not even at month 3.

The single biggest mistake CX leaders make is measuring ROI at month 2 and calling it a failure.

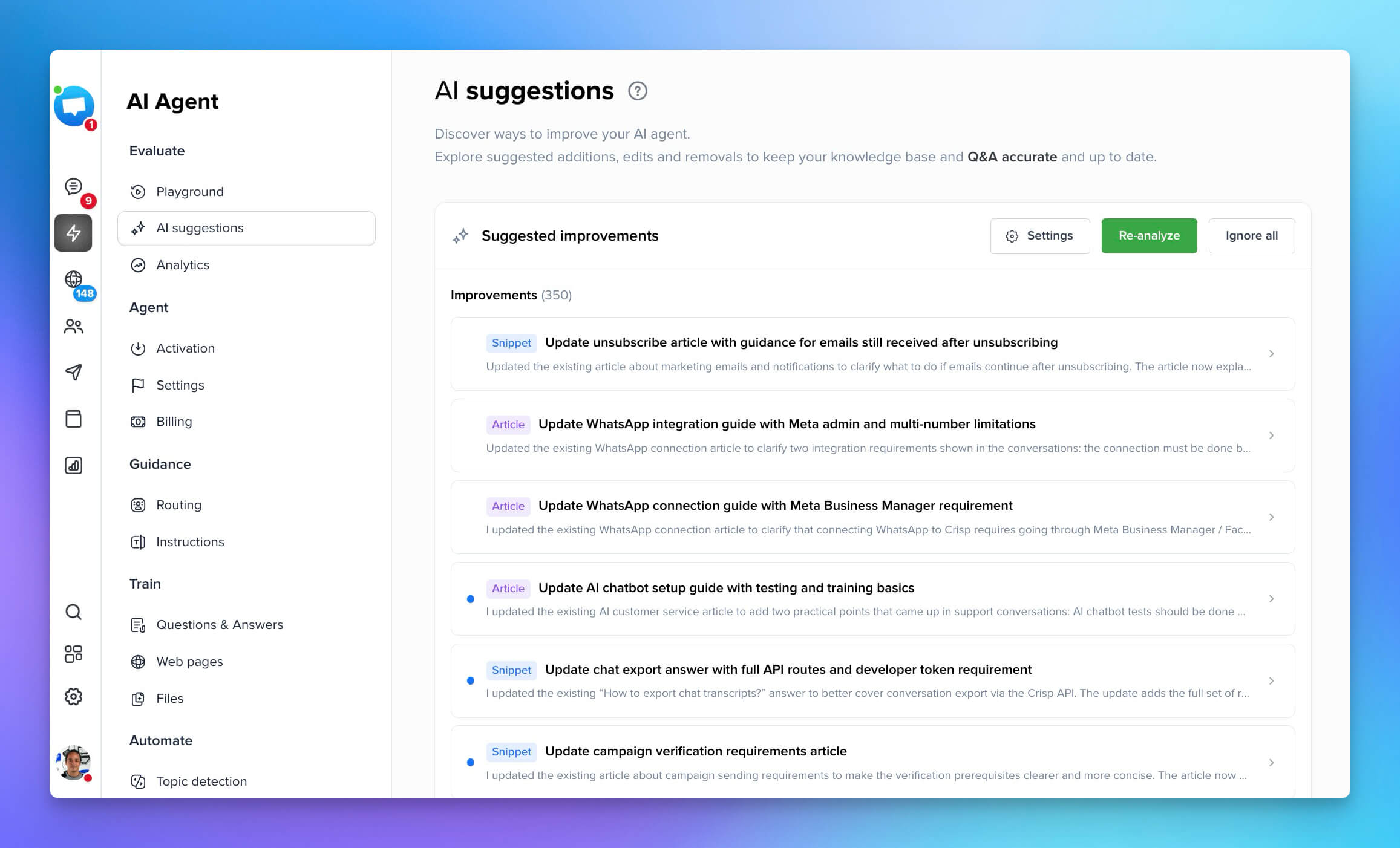

The AI Training Dataset is where AI Support ROI lives or dies

This doesn't get enough attention in AI Support ROI discussions, probably because it's unglamorous.

But it's the single biggest variable separating high-containment deployments from low-containment ones. More than the platform. More than the AI model underneath it.

An AI Support Chatbot trained on thin, outdated, or poorly structured documentation gives wrong answers or escalates the conversation. Wrong answers don't just fail to contain the query. They make it worse. The customer is frustrated and confused, which means the agent handling the escalation faces more handle time, not less. The ROI on that interaction is now negative.

The practical implication: the knowledge base needs continuous upkeep, not a one-time import. Crisp AI recently made that easier with AI Suggestions. By looking back at escalated conversations, the AI suggests knowledge improvements, either edits to existing articles or newly drafted ones, that humans just need to review.

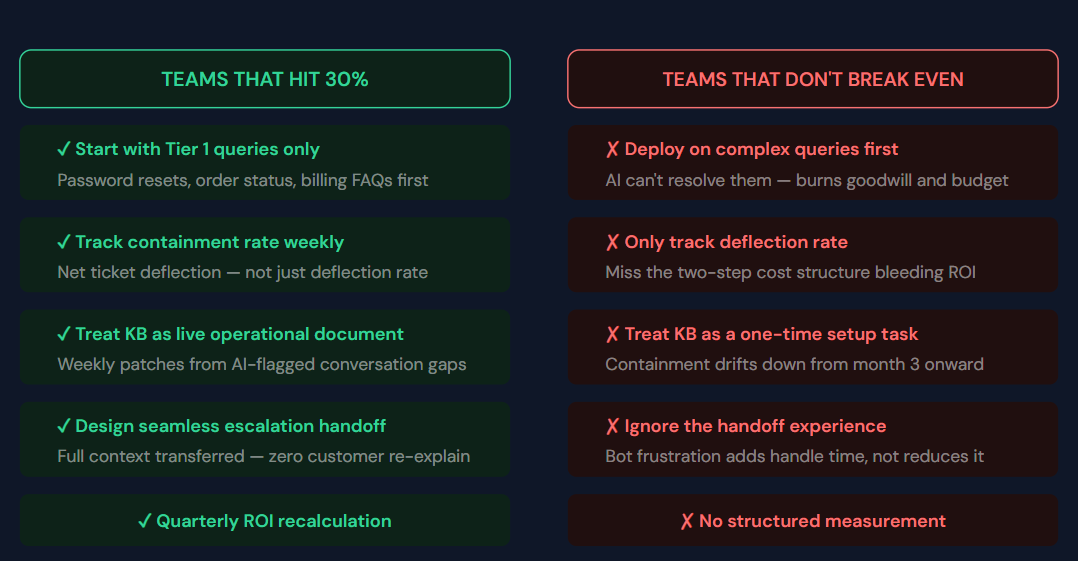

What separates the 30% savers from the ones who don't break even

The 35% of implementations that fail to break even don't fail randomly. They fail in recognizable, predictable patterns. Most of those patterns are visible before deployment if you know what to look for.

- Poor AI training dataset quality. A chatbot trained on thin or outdated documentation gives wrong answers. Wrong answers require agent correction, which costs more than if the query had gone to an agent directly. The ROI is negative.

- Low containment rate. Deflection rate (conversations started by the bot) and containment rate (conversations resolved end-to-end by the bot) are different numbers. Teams optimize for deflection and then discover that 40% of "deflected" conversations return as agent tickets. Real savings require containment.

- Wrong query targeting. Deploying AI on complex queries it can't handle burns goodwill and costs more to fix. Start with Tier 1 only.

- Ignoring the escalation experience. A chatbot that frustrates customers before handing off to an agent creates more handle time, not less. The handoff must be seamless and include full context.

- Not measuring. Teams that can't measure AI deflection rate, containment rate, and cost per AI interaction can't iterate. Without iteration, performance degrades.

A practical cost-benefit model

Here's a simplified model for a support operation handling 5,000 tickets/month at $20 cost per ticket:

| Metric | Before AI | After AI (40% deflection) |
|---|---|---|
| Monthly ticket volume | 5,000 | 5,000 |
| AI-handled | 0 | 2,000 @ $0.60 = $1,200 |
| Agent-handled | 5,000 @ $20 = $100,000 | 3,000 @ $20 = $60,000 |
| Total monthly cost | $100,000 | $61,200 |
| Monthly saving | n/a | $38,800 |
| Annual saving | n/a | $465,600 |

If the AI Support platform costs $2,000/month, first-year net savings are $441,600. That's not 30%. It's 38.8% before the platform fee and about 37% after it, because the model above runs a 40% deflection rate and assumes the deflected tickets stay resolved.
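Because the table treats every AI-handled ticket as resolved, it is worth seeing how the result moves when some of them bounce back. The sketch below reproduces the table and adds one hypothetical parameter, `containment_of_deflected` (the share of AI-handled tickets that stay resolved). It assumes a bounced ticket incurs both the AI cost and the full agent cost, in line with the deflection-versus-containment argument earlier in this article.

```python
def monthly_model(
    tickets: int,
    agent_cost: float,
    ai_cost: float,
    deflection_rate: float,
    containment_of_deflected: float = 1.0,  # hypothetical knob, 1.0 = table as given
    platform_fee: float = 0.0,
) -> dict[str, float]:
    """Monthly cost, saving, and reduction versus an all-agent baseline."""
    ai_attempted = tickets * deflection_rate
    bounced = ai_attempted * (1 - containment_of_deflected)
    # Bounced tickets end up with an agent after the AI has already been paid for.
    agent_handled = tickets - ai_attempted + bounced
    total = ai_attempted * ai_cost + agent_handled * agent_cost + platform_fee
    baseline = tickets * agent_cost
    saving = baseline - total
    return {"total": total, "saving": saving, "reduction": saving / baseline}


table_case = monthly_model(5_000, 20.0, 0.60, 0.40)
with_fee = monthly_model(5_000, 20.0, 0.60, 0.40, platform_fee=2_000)
half_bounce = monthly_model(5_000, 20.0, 0.60, 0.40,
                            containment_of_deflected=0.5, platform_fee=2_000)

for label, result in [("Table", table_case), ("With fee", with_fee),
                      ("Half bounce back", half_bounce)]:
    print(f"{label:>16}: saving ${result['saving']:,.0f} ({result['reduction']:.1%})")
```

Under these assumptions, the same 40% deflection rate yields 38.8% savings with full containment and 16.8% when half of the deflected tickets return, which is why containment is the number to watch.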

>>> Download the AI SUPPORT ROI Calculator

How to actually measure your AI support ROI

Most teams build a business case before deployment and stop measuring the moment the project goes live. That's the same as building a financial model and never opening the spreadsheet again. ROI isn't set at deployment. It's maintained through measurement.

- Establish your baseline before you deploy: Current cost per ticket, average handle time, tickets per agent per day, and CSAT. This is your before number. Without it you have no after.

- Measure net ticket deflection, not deflection rate: Count the conversations started by the AI that did not generate a subsequent agent ticket within 24 hours. Everything else is a leading indicator, not a savings number.

- Run quarterly ROI recalculations: Platform costs change. Ticket volume changes. Fully-loaded agent costs change with headcount. A quarterly refresh keeps your ROI story accurate and your stakeholder reporting credible.

- Add agent retention value at year two: A 10-point improvement in retention on a 20-person team, at $15,000 replacement cost per head, is $30,000 in annual savings that most ROI models never capture. By year two, you'll have the data to make this argument concretely.
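Net ticket deflection, as defined in the list above, can be computed from exported conversation records. The sketch below is one possible shape for that calculation. The `Conversation` record and its field names are hypothetical and do not correspond to any specific platform's export format; the 24-hour window is the one named above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Conversation:
    started_at: datetime
    handled_by_ai: bool
    # When a follow-up agent ticket was opened for the same issue, if ever.
    agent_ticket_at: Optional[datetime] = None


def net_deflected(conversations: list[Conversation],
                  window: timedelta = timedelta(hours=24)) -> int:
    """Count AI conversations with no agent ticket inside the window."""
    count = 0
    for conv in conversations:
        if not conv.handled_by_ai:
            continue
        no_ticket = conv.agent_ticket_at is None
        outside_window = (not no_ticket
                          and conv.agent_ticket_at - conv.started_at > window)
        if no_ticket or outside_window:
            count += 1
    return count


t0 = datetime(2025, 1, 6, 9, 0)
sample = [
    Conversation(t0, handled_by_ai=True),                                   # contained
    Conversation(t0, handled_by_ai=True, agent_ticket_at=t0 + timedelta(hours=2)),  # bounced
    Conversation(t0, handled_by_ai=True, agent_ticket_at=t0 + timedelta(days=3)),   # outside window
    Conversation(t0, handled_by_ai=False, agent_ticket_at=t0),              # never AI-handled
]
print(net_deflected(sample))  # 2
```

Dividing this count by the number of AI-handled conversations gives a rate you can track quarter over quarter alongside the baseline metrics.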

Get started with support cost reduction with Crisp

The 30% cost reduction claim is real for implementations that achieve 40–60%+ deflection with high containment rates on well-trained knowledge bases. For everything else, it's aspirational.

The teams that get there are the ones that start narrow (Tier 1 only), measure obsessively (containment, not just deflection), and iterate monthly on knowledge base gaps.

Crisp gives support teams the AI chatbot, analytics, and shared inbox to build toward those numbers without the enterprise overhead. The dashboard surfaces AI deflection rate, containment rate, and agent-handled volume side by side — so you can run this model with your own real data and see exactly where the ROI is building and where it's leaking, before it costs anything.

Frequently asked questions

How long does it take to see a return on investment from an AI chatbot?

Most businesses with high-volume Tier 1 queries see a positive ROI within 3 to 6 months. However, the first month is often a "ramp period" where net savings are low as the knowledge base is refined. By months 4-6, as the AI learns from real data and the scope expands, cost savings typically accelerate.

What is the primary driver of cost reduction when using AI in support?

Significant labor cost reduction through automated resolution and high containment rates for high-volume, repetitive customer questions.

Why do some companies fail to hit the 30% reduction target?

Success depends on high containment; mere deflection without resolution often leads to costly, frustrated human escalations later.

Does AI provide ROI beyond direct labor savings?

Yes, it reduces churn through instant 24/7 service and avoids expensive hiring and training costs during growth periods.
