Day four.

Marcus had been on the floor for four days. Not four weeks. Four days.

The ticket came. A logistics SaaS customer, frustrated, slightly hostile, and asking about a billing discrepancy that cut across three product tiers, two legacy plan structures, and a promotional rate his company had negotiated eighteen months before Marcus was hired.

A year ago, this ticket would have been escalated. Maybe twice. In a traditional support operation, a four-day agent doesn't touch complexity like this. They're still in the classroom, memorizing refund windows and product feature names, being quizzed on the knowledge base before they're allowed anywhere near a live queue.

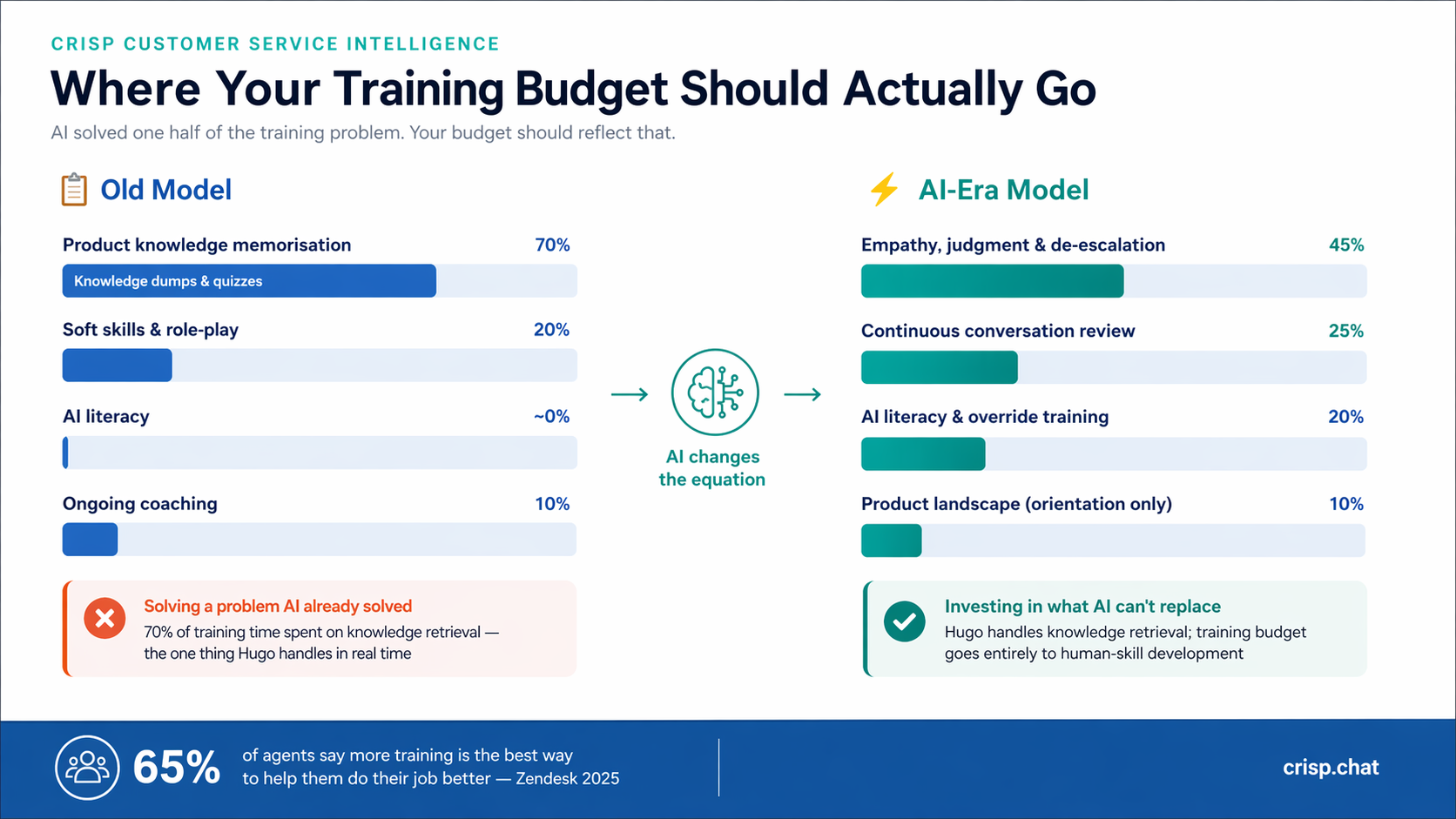

But Marcus wasn't navigating this alone. Hugo, Crisp's AI agent, had already surfaced the customer's plan history, flagged the promotional rate conflict, and drafted a response that covered the core issue. Marcus read it, caught that the customer's emotional register required a warmer opener than the AI had generated, rewrote the first two sentences, and sent it.

Resolution time: eleven minutes. CSAT: five stars.

That moment — the combination of AI surfacing what Marcus couldn't know yet and Marcus reading what the AI couldn't feel — is the new unit of customer service competence. And most training programs are still being built for a world before it existed.

The complexity cliff: what AI changed about the job itself

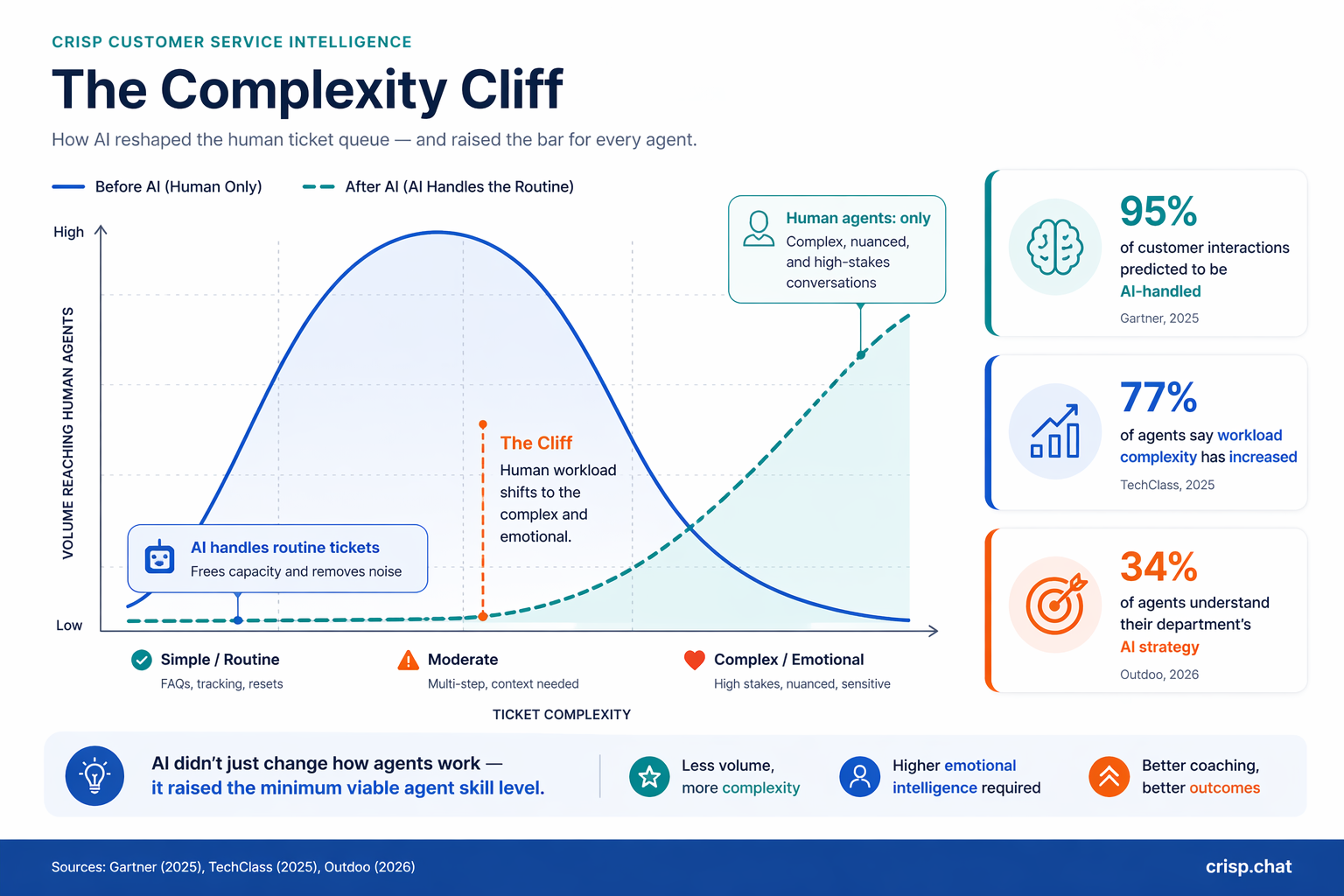

Before AI-assisted support became standard, a new agent's first month followed a gradual ramp. Simple tickets first: password resets, order status checks, basic FAQs. Complexity increased gradually as the agent built confidence and product familiarity. The shallow end of the pool existed specifically to develop agents before the deep water arrived.

AI drained the shallow end.

Routine inquiries, the tickets that used to give new agents their footing, are now resolved before they reach a human. What's left in the human queue is structurally harder than it's ever been: edge cases, multi-system issues, emotionally charged escalations, and policy judgment calls that don't have clean answers in any knowledge base.

The numbers confirm the shift.

77% of customer service representatives report that their workload complexity has increased compared to prior years.

At the same time, 95% of customer interactions are predicted to be handled by AI, which means the residual human interactions represent the highest-difficulty tier by definition.

This creates a structural problem that training programs haven't caught up with. Agents are entering a harder job than their predecessors held, with less runway to build skills incrementally, and in many cases with no formal preparation for working alongside AI at all.

Only 34% of agents report understanding their department's AI strategy. The gap is not a technology problem. It's a curriculum problem.

Look at the two curves in the chart. The blue line (before AI) forms a big hump: agents handle a lot of tickets across all levels, especially the simple and moderate ones. The green dashed line (after AI) stays flat on the left, because AI is taking over those routine tickets.

That sharp drop in human volume as you move from simple to moderate complexity—that’s the “cliff.” It’s the point where human involvement suddenly falls off because AI steps in.

Now follow the green line to the right. As ticket complexity increases, the line climbs—fast. That’s because the only work left for humans is the complex, emotional, high-stakes stuff. So even though total volume is lower, the concentration of difficulty is much higher.

The two types of customer service skills

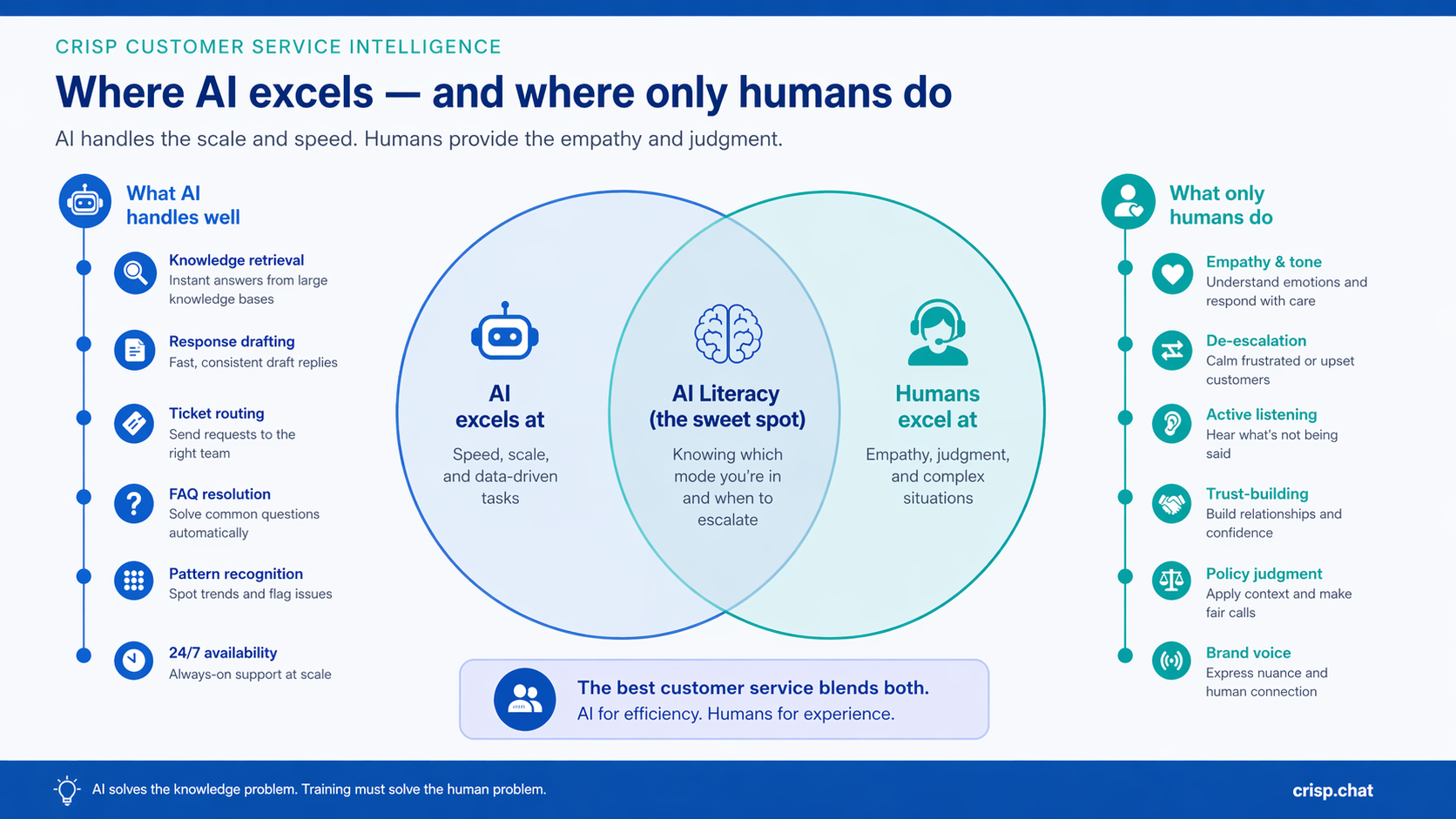

Customer service competence has always had two distinct components. What's changed is which one still requires human training time.

Product and process knowledge — knowing what your product does, how your refund policy works, where to find the answer for a given question. This is learnable from documentation, but difficult to recall accurately under conversational pressure.

Human interaction skills — empathy, de-escalation, active listening, reading emotional register, knowing when to escalate and how to do it without eroding trust. This cannot be found in a knowledge base and cannot be reliably generated by any AI system.

The distinction matters because AI excels at one and is genuinely limited at the other. Think of it like a surgical team. AI is the monitoring equipment — precise, fast, tireless, surfacing data the surgeon needs in real time. The surgeon still has to make the judgment call. You don't train a surgeon to read the monitor. You train them for what happens when the monitor shows something no protocol anticipated.

80% of customers prefer brands that show real understanding — not product accuracy, not speed. Understanding. While nearly half of customers now believe AI can be empathetic in routine interactions, the complex and emotionally charged ones still require unmistakably human responses.

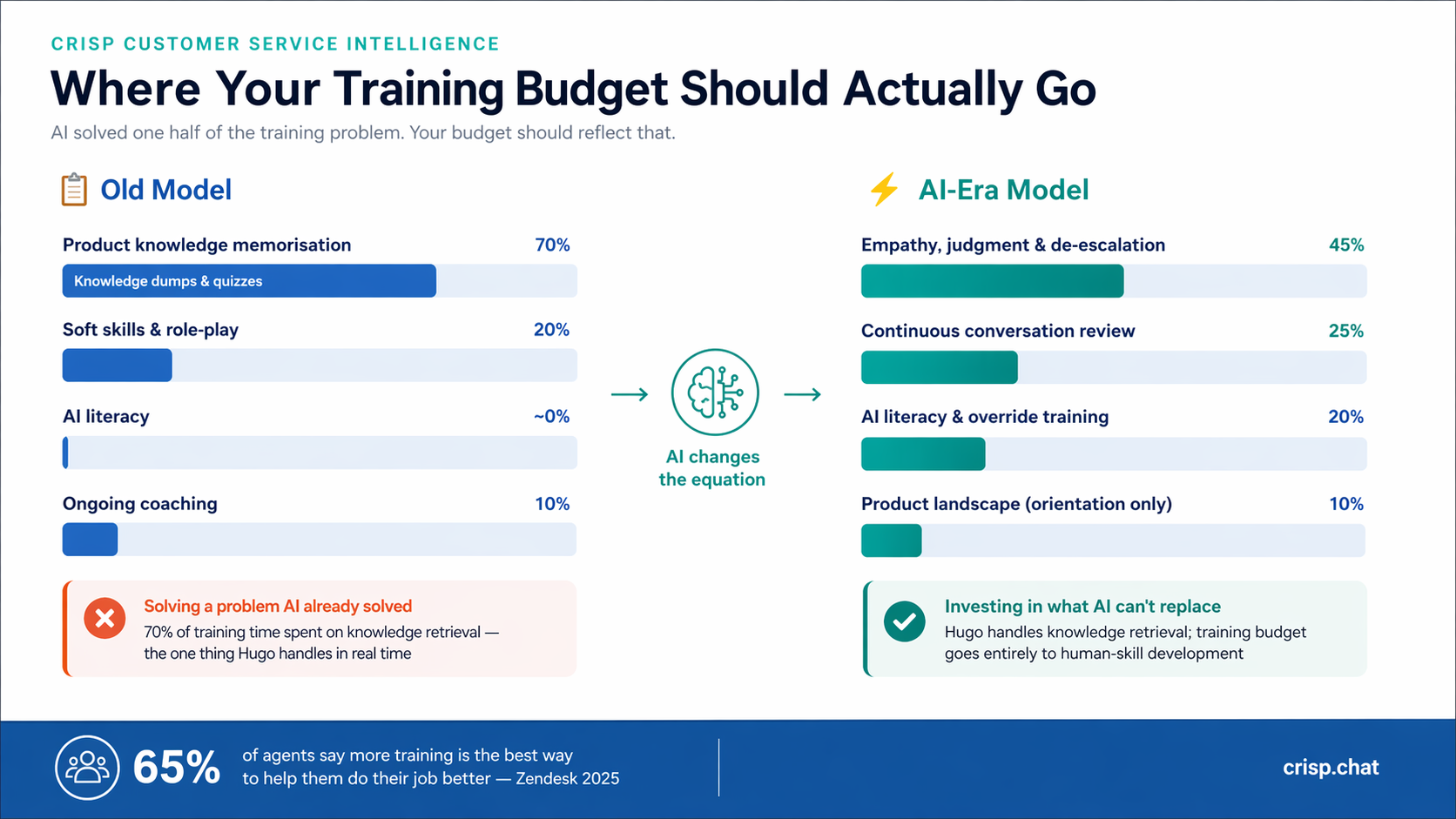

The practical implication: product knowledge retrieval has been solved. Human interaction skills have not. Your training curriculum should reflect that asymmetry.

Why this is a budget problem, not just a curriculum problem

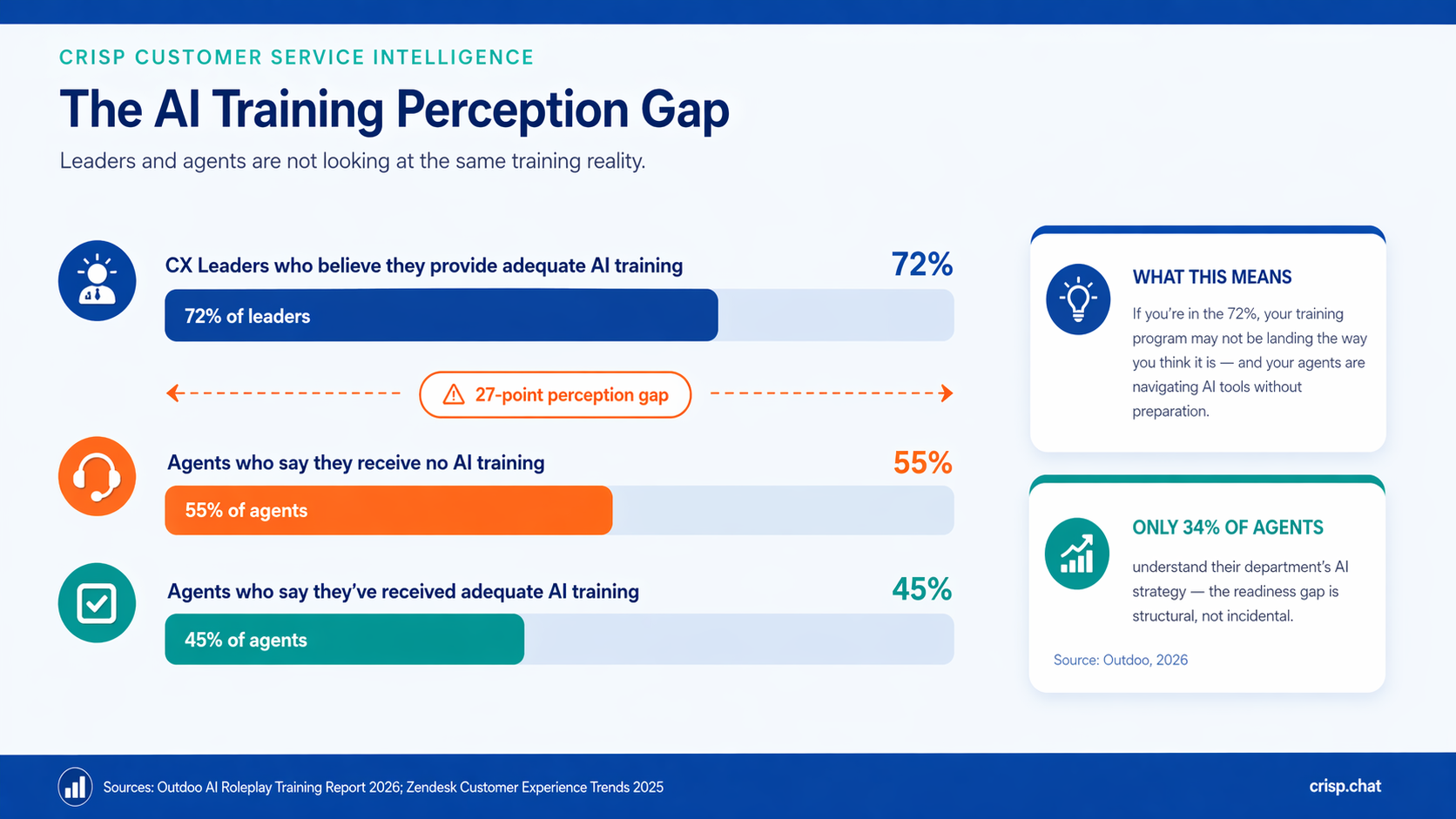

The misalignment between how training is perceived at the leadership level versus the agent level is one of the most reliable blind spots in CX operations.

72% of CX leaders believe they provide adequate AI training. 55% of agents say they receive none.

Only 45% of agents report having received meaningful AI preparation at all.

If you're in that 72%, this data means you may be running a training program that isn't landing the way you think it is. That's a performance and retention issue with a direct cost attached.

Customer service turnover runs between 30% and 45% annually, with the average agent staying just 13.7 months.

In practice, this means a support team is rarely dealing with a one-time training problem. It's perpetually rebuilding. Every inefficiency in the training model, every week spent on product knowledge that AI could surface instead, compounds across every new hire cycle.

The math is unforgiving. Replacement costs run between $10,000 and $20,000 per agent when recruiting, onboarding, and lost productivity are factored together. An eight-week ramp time that could be four weeks is not a small problem when it repeats with every new hire.
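To make that math concrete, here is a rough sketch of the arithmetic. The turnover range, the replacement cost range, and the eight-week and four-week ramp times are the figures quoted above; the 40-agent team size and the function names are assumptions chosen purely for illustration, so swap in your own numbers.

```python
# Rough turnover-cost sketch. TEAM_SIZE is a hypothetical input;
# the rate, cost, and ramp figures are the ones quoted in this post.

def annual_replacement_cost(team_size, turnover_rate, cost_per_agent):
    """Expected yearly spend on replacing agents who leave."""
    return team_size * turnover_rate * cost_per_agent

def ramp_weeks_saved(team_size, turnover_rate, old_ramp_weeks, new_ramp_weeks):
    """Agent-weeks of ramp time recovered per year across all new hires."""
    hires_per_year = team_size * turnover_rate
    return hires_per_year * (old_ramp_weeks - new_ramp_weeks)

TEAM_SIZE = 40  # assumption for illustration only

# Low end: 30% turnover at $10,000 per replacement.
low_cost = annual_replacement_cost(TEAM_SIZE, 0.30, 10_000)
# High end: 45% turnover at $20,000 per replacement.
high_cost = annual_replacement_cost(TEAM_SIZE, 0.45, 20_000)

# Ramp time recovered if an eight-week ramp becomes four weeks.
low_weeks = ramp_weeks_saved(TEAM_SIZE, 0.30, 8, 4)
high_weeks = ramp_weeks_saved(TEAM_SIZE, 0.45, 8, 4)

print(f"Replacement cost per year: ${low_cost:,.0f} to ${high_cost:,.0f}")
print(f"Ramp time recoverable per year: {low_weeks:.0f} to {high_weeks:.0f} agent-weeks")
```

Under these assumptions, a 40-agent team spends roughly $120,000 to $360,000 a year on replacement alone, and halving the ramp would recover about 48 to 72 agent-weeks annually.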

This is where the business case for rethinking training sharpens. The question isn't whether to invest in better training. It's whether the investment is going to the half of the problem that still needs it.

The ramp time opportunity

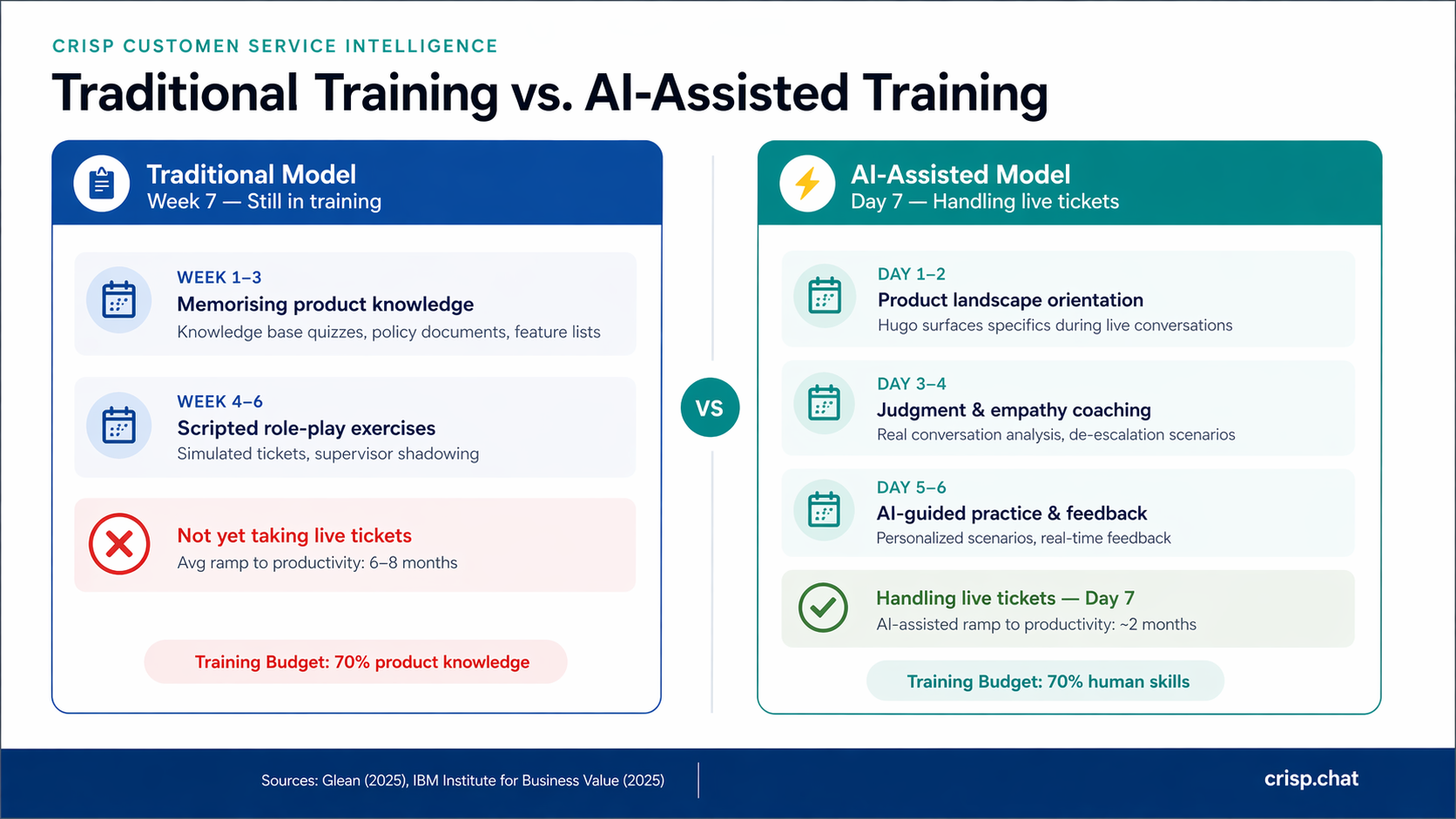

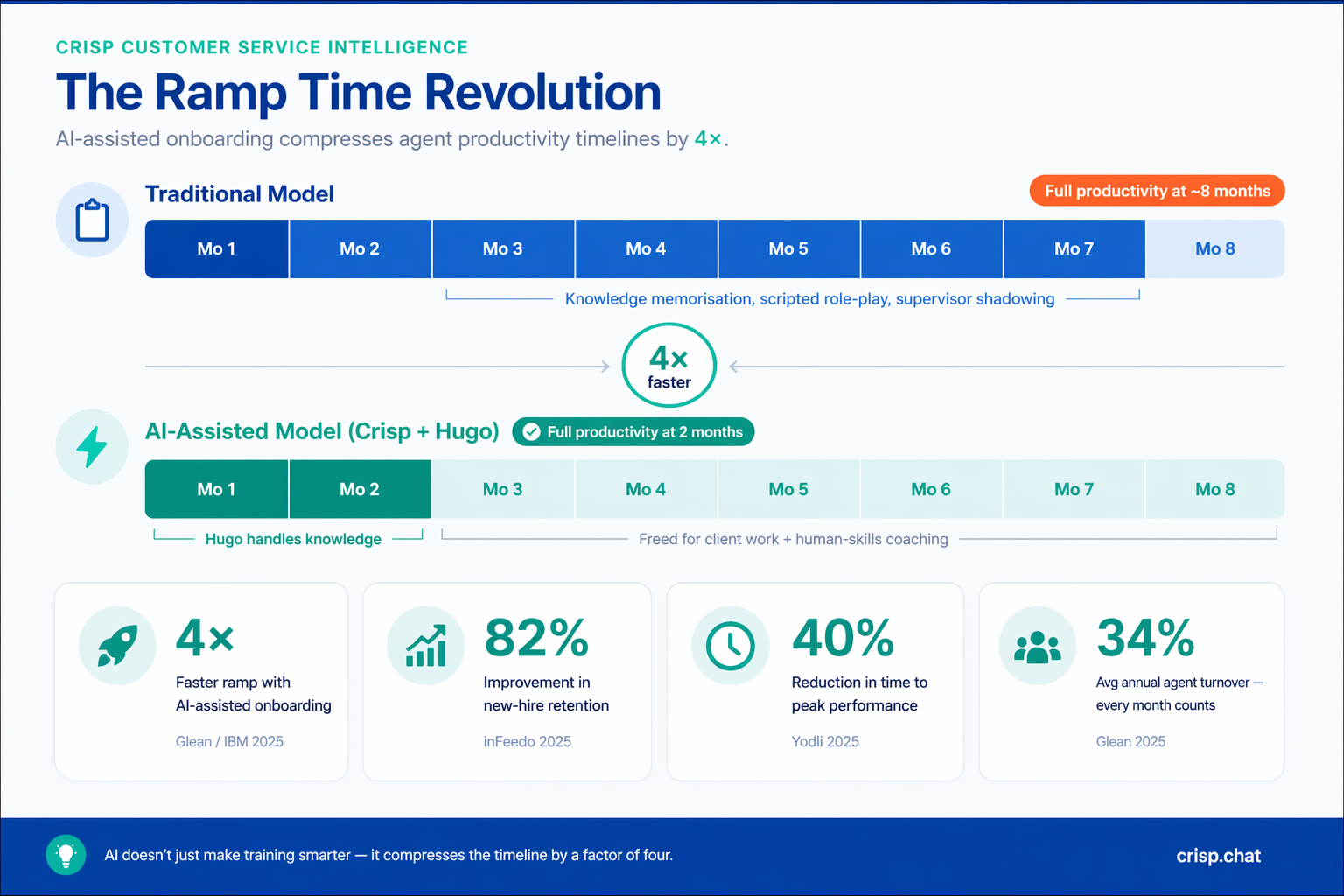

The clearest ROI argument for AI-informed training is not quality improvement — it's speed. Traditional support onboarding takes six to eight months to reach full agent productivity. That's not a ceiling. It's a baseline that AI-assisted programs are already breaking.

AI-accelerated onboarding has compressed the ramp-to-competence timeline from eight months to two, a fourfold improvement. IBM documented a 50% reduction in time-to-peak-productivity using AI-enhanced onboarding systems. Organizations with strong AI onboarding infrastructure report an 82% improvement in new hire retention and a 40% reduction in the time agents take to reach full performance levels.

The mechanism isn't complicated. When AI handles product knowledge retrieval during live conversations, new agents don't need to hold the entire knowledge base in their heads before they're useful. They can be productive on day four. Which means the training program can focus on building the skills that actually require human development — from week one.

Why this matters for support team leads

65% of agents say more training is the best way to help them do their job better. If training time is finite — and it always is — leaders who spend it on product knowledge retrieval are solving a problem AI already solved. Leaders who spend it on judgment, empathy, and emotional intelligence are building what AI can't replicate.

The practical reallocation: use onboarding to establish product foundations and team culture, then use AI tools to handle ongoing product knowledge retrieval. Reserve your real coaching time for the human skills that separate average agents from excellent ones.

6 ways to teach customer service skills in the age of AI

1. Let AI carry product knowledge — train for judgment instead

The first week of training shouldn't be a knowledge dump. It should be a calibration exercise. Give new agents enough product context to understand the landscape, then trust AI to surface the details when they're needed.

A landmark study published in the Quarterly Journal of Economics found that AI assistance increased agent productivity by 15% on average — with the largest gains, 34%, concentrated among less experienced agents. The mechanism is exactly what you'd expect: AI fills knowledge gaps in real time, which means training can shift from knowledge transfer to judgment-building earlier in the ramp.

The practical reallocation looks like this. Use onboarding week one to establish product landscape awareness — enough to understand the categories of issue, not memorize every answer. Use Hugo to surface the specifics during live conversations. Reserve coaching time for the judgment layer: why do we handle edge case X this way? What does a good de-escalation look like when the customer is right and the policy is rigid? Those questions don't have answers in any knowledge base. They require a human who's been coached.

2. Teach empathy through real conversations — and AI simulation

The least effective way to teach empathy is through scripted role-plays. The most effective way combines two approaches, neither of which the traditional training model delivers: real conversation analysis and AI-powered simulation.

Real conversation analysis gives trainers access to exactly the material that drives improvement: filter by ticket type, surface low-CSAT conversations, identify the pattern. Use those conversations as case studies. The distance from reality is zero. The specificity is high. The coaching is concrete and immediately actionable.

AI-powered simulation fills the practice gap that conversation analysis alone can't close. An agent can study a de-escalation interaction — but studying and doing are different disciplines. AI simulation platforms generate adaptive, realistic customer conversations that respond to agent choices, escalate when mishandled, and provide structured feedback after each exchange. Think of it the way commercial aviation thinks about flight simulators. Airlines don't train pilots by analyzing crash data and then putting them in a cockpit. They simulate. Thousands of hours. Customer service deserves the same logic.

Organizations implementing conversation-based training saw 15% CSAT improvements and 20% average handle time reductions within six months.

AI simulation compounds those gains by giving agents repetition without risk — the ability to fail, adjust, and refine in an environment where no customer relationship is on the line.

3. Build product knowledge incrementally, synchronized with live work

Traditional training tries to transfer product knowledge in a single block before an agent ever takes a ticket. The reality is that agents don't need to know everything about your product on day one. They need just enough to handle the tickets they'll actually see, with AI available to fill the rest.

A more effective model is incremental: introduce core product areas progressively over four weeks, synchronized with the ticket types the agent is actually handling. Knowledge encountered during a real ticket embeds far longer than knowledge read in a document. The learning is contextual rather than abstract. The stakes make it stick.

Research on personalized, flow-of-work learning supports this. Spacing content out over days rather than delivering it in a single block helps agents retain 80% more information and take 33% more action on what they've learned. Knowledge encountered during a real interaction isn't forgotten after the quiz.

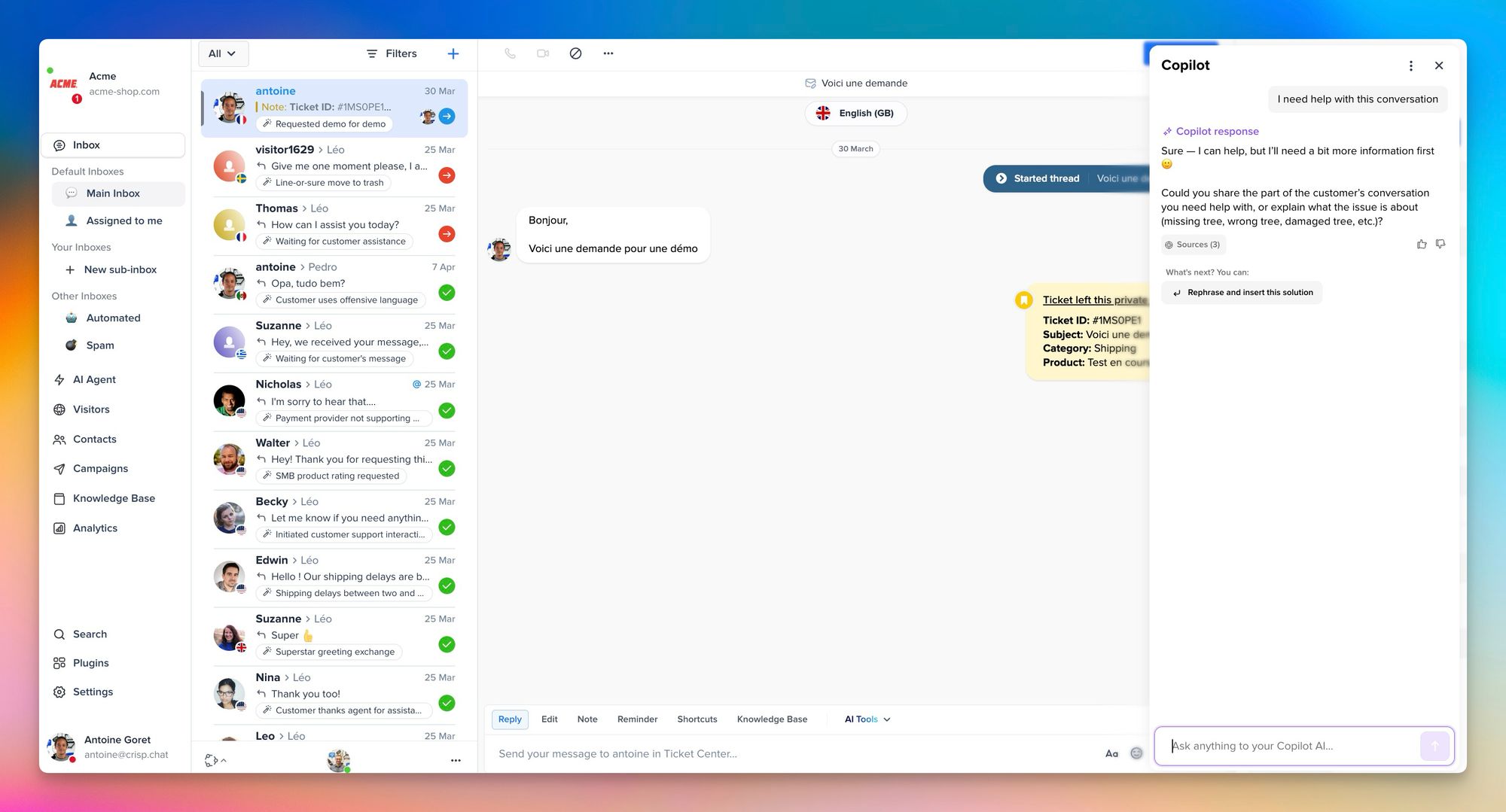

4. Use analytics to identify the specific skills each agent actually needs

Generic training wastes time. If one agent struggles with empathy and another struggles with resolution speed, they need different coaching — not the same module delivered to both. The single biggest inefficiency in most customer service training programs is the assumption that agents have identical development needs.

AI analytics make individualized skill identification possible at scale. By analyzing each agent's handle times, CSAT scores, escalation rates, and conversation sentiment, managers can build a precise skill profile for every team member — and deliver training that addresses their actual gaps rather than assumed ones.

AI-driven coaching helps supervisors identify coaching opportunities based on where agents actually underperform, rather than delivering generic training modules. The result is coaching that's more targeted, more credible to the agent receiving it, and significantly more effective at driving measurable change.
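As a minimal sketch of what a skill profile could look like, the snippet below compares each agent's metrics with the team average and flags the ones that fall meaningfully behind. Everything in it is hypothetical: the agent names, the field names, the sample values, and the 10% tolerance are assumptions for illustration, not output from any real analytics tool. Conversation sentiment is left out to keep the example short.

```python
from statistics import mean

# Hypothetical per-agent metrics; names, fields, and values are assumptions.
agents = {
    "agent_a": {"csat": 4.6, "handle_min": 14.0, "escalation_rate": 0.08},
    "agent_b": {"csat": 3.6, "handle_min": 9.5, "escalation_rate": 0.10},
    "agent_c": {"csat": 4.5, "handle_min": 10.0, "escalation_rate": 0.21},
}

# For each metric, whether a higher value means better performance.
HIGHER_IS_BETTER = {"csat": True, "handle_min": False, "escalation_rate": False}

# Assumed threshold: flag a metric that is more than 10% worse than team average.
TOLERANCE = 0.10

def skill_gaps(agents):
    """Return, per agent, the metrics that trail the team average."""
    team_avg = {
        metric: mean(stats[metric] for stats in agents.values())
        for metric in HIGHER_IS_BETTER
    }
    gaps = {}
    for name, stats in agents.items():
        flagged = []
        for metric, higher_better in HIGHER_IS_BETTER.items():
            relative = (stats[metric] - team_avg[metric]) / team_avg[metric]
            # Convert to "how much worse than average", whatever the direction.
            worse_by = -relative if higher_better else relative
            if worse_by > TOLERANCE:
                flagged.append(metric)
        gaps[name] = flagged
    return gaps

for agent, flagged in skill_gaps(agents).items():
    print(agent, "needs coaching on:", ", ".join(flagged) or "nothing flagged")
```

With this sample data, each agent is flagged for a different metric, which is the point: one needs coaching on resolution speed, one on customer satisfaction, and one on escalation judgment.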

5. Train agents on when not to use AI — not just how to use it

The most nuanced customer service skill in 2026 is knowing when to trust AI and when to override it. An AI suggestion is usually right. But 'usually right' is not 'always right' — and agents who can't distinguish between a confident AI suggestion and a confident AI mistake are a liability, not an asset.

AI literacy training has three components. First, understand what the AI is drawing from — the knowledge base, conversation history, ticket type classification. Second, know its failure modes: thin or outdated KB content, unusual ticket types, and emotionally sensitive contexts where technically correct responses land badly. Third, develop the judgment to read those signals in real time and act accordingly.

The cultural context dimension deserves specific attention for global teams. AI systems are trained on datasets that don't capture every communication norm. Misreading sarcasm, cultural politeness conventions, or emotional register is a documented failure mode — not a theoretical one. Agents working cross-culturally need explicit training on where the AI's confidence outpaces its accuracy.

6. Build a continuous learning loop

Onboarding is where the foundation gets laid. But the agents who become excellent are the ones embedded in a continuous learning infrastructure, not those who attended a strong training week and then received no structured development for the next eleven months.

The traditional model treats training as an event. The agent arrives, completes a program, and is released to the queue. Development after that point depends almost entirely on whether their manager has time to coach them. In a high-volume support operation, that time rarely exists.

65% of agents say more training is the best single thing their employer could do to help them perform better. The demand is there. The infrastructure usually isn't.

The modern alternative is embedded learning: structured weekly conversation reviews, AI-surfaced micro-coaching in the flow of work, monthly skill profiles updated from live performance data, and team-wide visibility into what good looks like across ticket types. The goal is not more training time. It's smarter distribution of the coaching capacity that already exists.

Spaced reinforcement makes this tractable. Micro-learning delivered across multiple sessions rather than in a single block has been shown to improve retention by 80% and increase follow-through by 33%. Weekly fifteen-minute conversation reviews, anchored in Crisp Analytics data, deliver more compounding development value than quarterly training days.

How to know if your training reallocation is working

Reallocating training budget from product knowledge to human skills is a strategic decision that needs to be measured strategically. These are the five metrics that tell you whether the shift is working.

- CSAT delta by cohort. Compare CSAT scores for agents trained under the new model versus agents trained under the old one, at the same tenure point — say, 60 days in. Improvement here is the clearest signal that coaching time is landing where it matters.

- Average handle time by ticket complexity tier. Handle time on simple tickets should drop as AI assists more efficiently. Handle time on complex, high-emotion tickets is the real indicator — it should decrease as agents get better at de-escalation and resolution judgment.

- Time to first resolution on complex tickets. This measures judgment quality, not just speed. An agent who resolves a billing dispute correctly in the first interaction is demonstrating exactly the skill the new curriculum prioritizes.

- Ramp-to-productivity timeline. Track how long it takes a new agent to independently handle your top five ticket types without escalation. Under an AI-assisted model, this should compress measurably. Four months instead of eight is a realistic target in the first year of implementation.

- AI override rate over time. A well-calibrated agent should override AI suggestions at a stable, low rate — not zero (which signals passive acceptance) and not high (which signals AI literacy gaps). Track this per agent and use it as a proxy for judgment quality.
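Two of these metrics are simple enough to sketch in a few lines. The example below computes a CSAT delta between cohorts and classifies an agent's AI override rate. The sample scores, the function names, and the 30% "high" threshold are all assumptions made for illustration; only the idea that zero and high rates are both warning signs comes from the list above.

```python
from statistics import mean

# Hypothetical CSAT scores (1-5 scale) at the 60-day mark, one per agent.
csat_at_60_days = {
    "old_model": [4.1, 3.9, 4.0, 4.2],
    "new_model": [4.4, 4.5, 4.3, 4.6],
}

def csat_delta(scores_by_cohort):
    """Average CSAT of the new-model cohort minus the old-model cohort."""
    return mean(scores_by_cohort["new_model"]) - mean(scores_by_cohort["old_model"])

def override_rate(shown, overridden):
    """Share of AI suggestions the agent changed or rejected; None if no data."""
    if shown == 0:
        return None
    return overridden / shown

HIGH_OVERRIDE = 0.30  # assumed threshold; calibrate against your own team

def override_signal(rate):
    """Map an override rate to the interpretation described in the list above."""
    if rate is None:
        return "no data"
    if rate == 0:
        return "passive acceptance"
    if rate > HIGH_OVERRIDE:
        return "possible AI literacy gap"
    return "within expected band"

delta = csat_delta(csat_at_60_days)
rate = override_rate(shown=200, overridden=18)

print(f"CSAT delta (new vs old cohort): {delta:+.2f}")
print(f"Override rate: {rate:.0%} ({override_signal(rate)})")
```

Tracking the override signal per agent over several weeks, rather than reading a single snapshot, is what turns it into a usable proxy for judgment quality.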

What are the most important customer service skills to teach in 2026?

The most important customer service skills to teach in 2026 are empathy and emotional intelligence, de-escalation, active listening, AI literacy (knowing when to use and override AI), and policy judgment (understanding the 'why' behind decisions, not just the rule). Product knowledge retrieval, which consumed the majority of legacy training time, is now largely handled by AI tools. The training curriculum that wins in 2026 covers less of what agents can look up and more of how they respond when looking things up is no longer enough.

The skill set is also expanding in one new direction that most training programs haven't formalized: cross-functional handoff quality. As AI handles more first-contact resolution, the tickets that reach human agents increasingly require coordination across teams: billing, technical, and account management. Training agents to execute handoffs without eroding customer trust is becoming a core competency, not a secondary one.

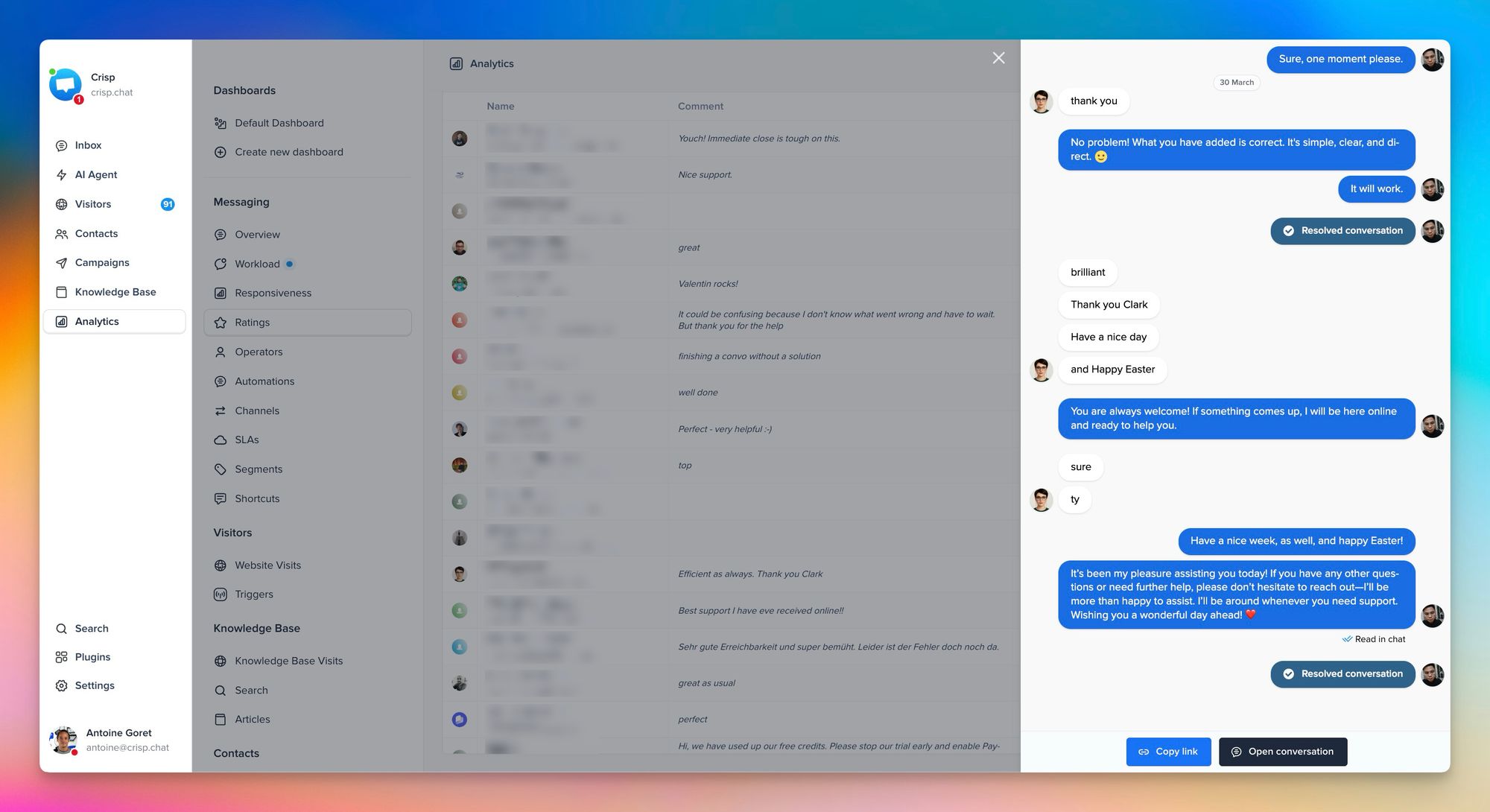

How Crisp supports this training framework

Crisp's product architecture aligns directly with the training model described in this post. Not as a feature set, but as a structural philosophy about what AI should and shouldn't be responsible for in a support operation.

Hugo, Crisp's AI agent, removes product knowledge retrieval from the cognitive load of every agent conversation. This changes what training needs to cover — not as an efficiency optimization, but as a curriculum design decision. When an agent doesn't have to carry the full product knowledge load during a live interaction, they have the working memory available to read the customer, calibrate their tone, and make the judgment calls that determine whether a complex interaction resolves or escalates.

Crisp's Analytics gives trainers the data to make coaching specific. Instead of general feedback after a shift, every coaching session can target the exact ticket types and interaction patterns where each agent underperforms. The difference between 'you need to work on empathy' and 'your CSAT drops twelve points on billing disputes specifically — here are three examples from this week' is the difference between a manager's impression and a development program.

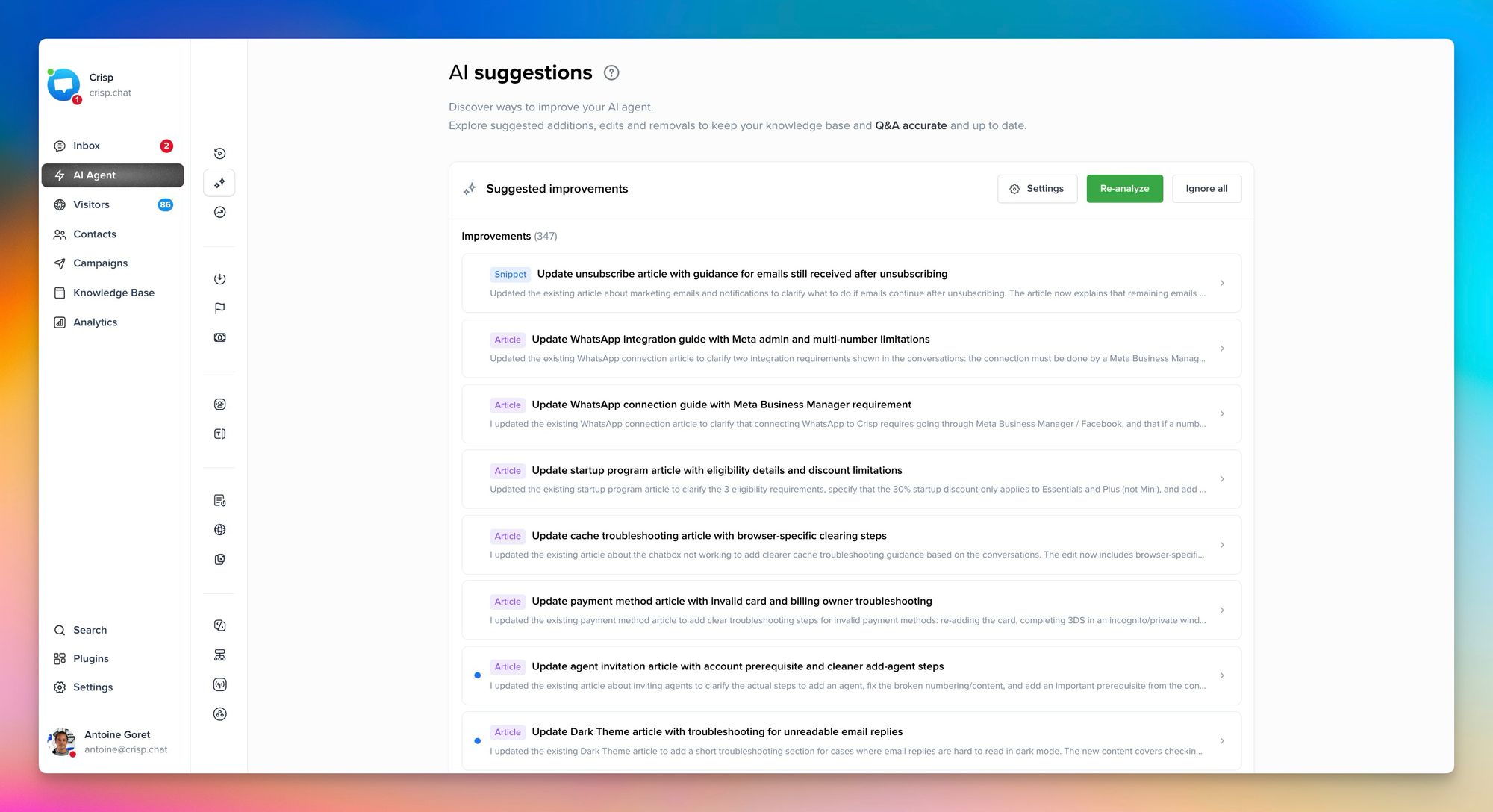

Crisp's Knowledge Base becomes a living training resource. Every well-structured article is a future coaching moment: Hugo surfaces it, the agent uses it, and the knowledge embeds through application rather than memorization. The KB improves over time as AI Suggestions identifies training gaps and suggests knowledge improvements, which means the AI's retrieval quality improves continuously without dedicated training cycles.

The result is a training infrastructure where AI handles the knowledge problem, analytics handles the performance visibility problem, and the coaching time that remains goes entirely to the human skills that AI cannot replicate.

The new training curriculum

The future of customer service training is not shorter. It's smarter. Less time on what agents can look up. More time on how they respond when looking things up isn't enough. Less product recitation. More conversation analysis. Less generic onboarding. More individualized coaching anchored in real performance data.

The agents who will define what excellent support looks like in the next three years are the ones who understand AI deeply enough to trust it where it's strong and override it where it isn't — and who have been coached intensively in the human skills that no AI model is going to replicate.

That's the curriculum. The teams building it now are the ones whose CSAT scores will look very different in twelve months.

Start building a smarter support team with Crisp today!

Sources

Gartner, AI in customer service and automation trends (95% interaction prediction), https://www.gartner.com/en/newsroom/press-releases/2019-02-25-gartner-says-25-percent-of-customer-service-operations-will-use-virtual-customer-assistants-by-2020

Gartner, Customer service workload complexity and agent experience trends, https://www.gartner.com/en/customer-service-and-support/insights

McKinsey & Company, AI impact on productivity and augmentation of knowledge workers, https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-economic-potential-of-generative-ai-the-next-productivity-frontier

IBM, AI-driven onboarding and productivity improvements, https://www.ibm.com/thought-leadership/institute-business-value/en-us/report/ai-in-customer-service

National Bureau of Economic Research, AI assistance productivity gains (15% avg, 34% for junior agents), https://www.nber.org/papers/w31161

Salesforce, State of Service report (AI adoption, agent expectations, training gaps), https://www.salesforce.com/resources/research-reports/state-of-service/

HubSpot, Customer service trends (training gaps, AI usage, expectations), https://www.hubspot.com/state-of-service

PwC, Customer experience survey (importance of human interaction and empathy), https://www.pwc.com/us/en/services/consulting/library/consumer-intelligence-series/future-of-customer-experience.html

Deloitte, Global contact center survey (workforce challenges, complexity, training needs), https://www2.deloitte.com/us/en/pages/operations/articles/global-contact-center-survey.html

Society for Human Resource Management, Employee turnover and retention benchmarks, https://www.shrm.org/resourcesandtools/hr-topics/talent-acquisition/pages/employee-turnover-rates.aspx

Forrester, AI in customer experience and agent augmentation, https://www.forrester.com/report/the-future-of-customer-service/