In 2019, a customer service coach's week looked like this: pull call recordings, score them against a rubric, write feedback notes, schedule one-on-ones, and sit in on live calls twice a week. The job was primarily about quality assurance—ensuring agents hit their script, answered within SLA, and didn't do anything that would generate a complaint.

It was methodical work. It was also largely manual, repetitive, and easily overwhelmed by the sheer volume of interactions that needed reviewing. A typical coach could monitor, at most, three to five percent of all conversations. The rest went unseen.

By 2024, that job had changed fundamentally. Not because coaching became less important—because AI changed what required human attention.

In teams that deployed AI copilots, chatbots, or automated triage, the easy tickets stopped reaching agents. AI handled password resets, order status checks, FAQ responses, and common billing questions. What landed in the human queue was filtered: complex cases, emotionally charged conversations, edge cases outside the chatbot's training data, and situations where judgment mattered more than speed.

The customer service coach didn't disappear. But the job description rewrote itself.

What AI changed about customer support—and why coaching had to evolve

When AI handles the routine, it doesn't just reduce ticket volume. It changes the nature of the work entirely.

Before AI, a customer service team spent roughly 40 to 60 percent of its capacity on repetitive, low-complexity interactions. This meant two things for coaching: there was a high volume of interactions to monitor for compliance, and many of those interactions were simple enough that coaching them was straightforward—follow the script, check the tone, reduce handle time.

That work is now largely automated. According to research published in the Quarterly Journal of Economics, AI-powered assistance in customer service teams produced a 15% average increase in agent productivity, with the largest gains concentrated among less-experienced workers who were essentially trained in real time by AI suggestions.

This creates a meaningful shift for coaching: the cases that now land in the human queue are precisely the ones where human coaching adds the most value. Agents are handling fewer, but harder, interactions. And the skills required to handle them well are exactly the ones AI cannot substitute for: emotional judgment, contextual escalation decisions, and the ability to build trust with a frustrated customer.

A customer service coach in an AI-driven team is no longer primarily a quality assurance function. They are an expert in the cases AI can't handle—and a translator between AI capabilities and human agent development.

The new coaching mandate: five dimensions that matter now

- Complex case judgment is the first and most urgent shift. The interactions that reach human agents now are systematically harder than they used to be. Coaches need to build playbooks for edge cases, not just standard scenarios. This means reviewing resolved complex tickets regularly, identifying patterns in where agents hesitate or escalate unnecessarily, and building scenario libraries that help agents develop judgment faster than experience alone allows.

- Emotional intelligence and de-escalation is the second dimension. When a customer reaches a human agent in an AI-first support stack, it often means something went wrong upstream—the chatbot misunderstood them, they're frustrated after multiple touchpoints, or the situation is genuinely high-stakes. According to AIHR, agents dealing with higher-complexity emotional interactions consistently report higher burnout risk when they don't receive coaching on de-escalation techniques. The coach's role here is part teacher, part therapist: building skills that prevent agent burnout while improving customer outcomes on the hardest interactions.

- AI output calibration is the third dimension, and one that didn't exist five years ago. When an agent accepts an AI-suggested response without reading it carefully, or habitually rejects good suggestions, the team underperforms relative to its potential. Coaches now need to train agents on how to work effectively with AI suggestions: when to accept, when to edit, and when to override. This meta-skill is the difference between a team that benefits from AI and a team that merely has AI deployed. The same QJE research found that the teams with the largest productivity gains were those where agents actively calibrated their use of AI suggestions rather than accepting them passively.

- Agent career development and retention is the fourth dimension. As routine work shifts to AI, agents in high-performing teams often ask: what does my growth path look like? The coach becomes a crucial retention factor: the person who helps agents see their development trajectory and understand how their skills are evolving alongside the tools they use. According to AIHR, agents in teams with structured coaching programs report 40 to 60 percent higher job satisfaction. That advantage shrinks significantly without active coaching, even when AI tools are in place.

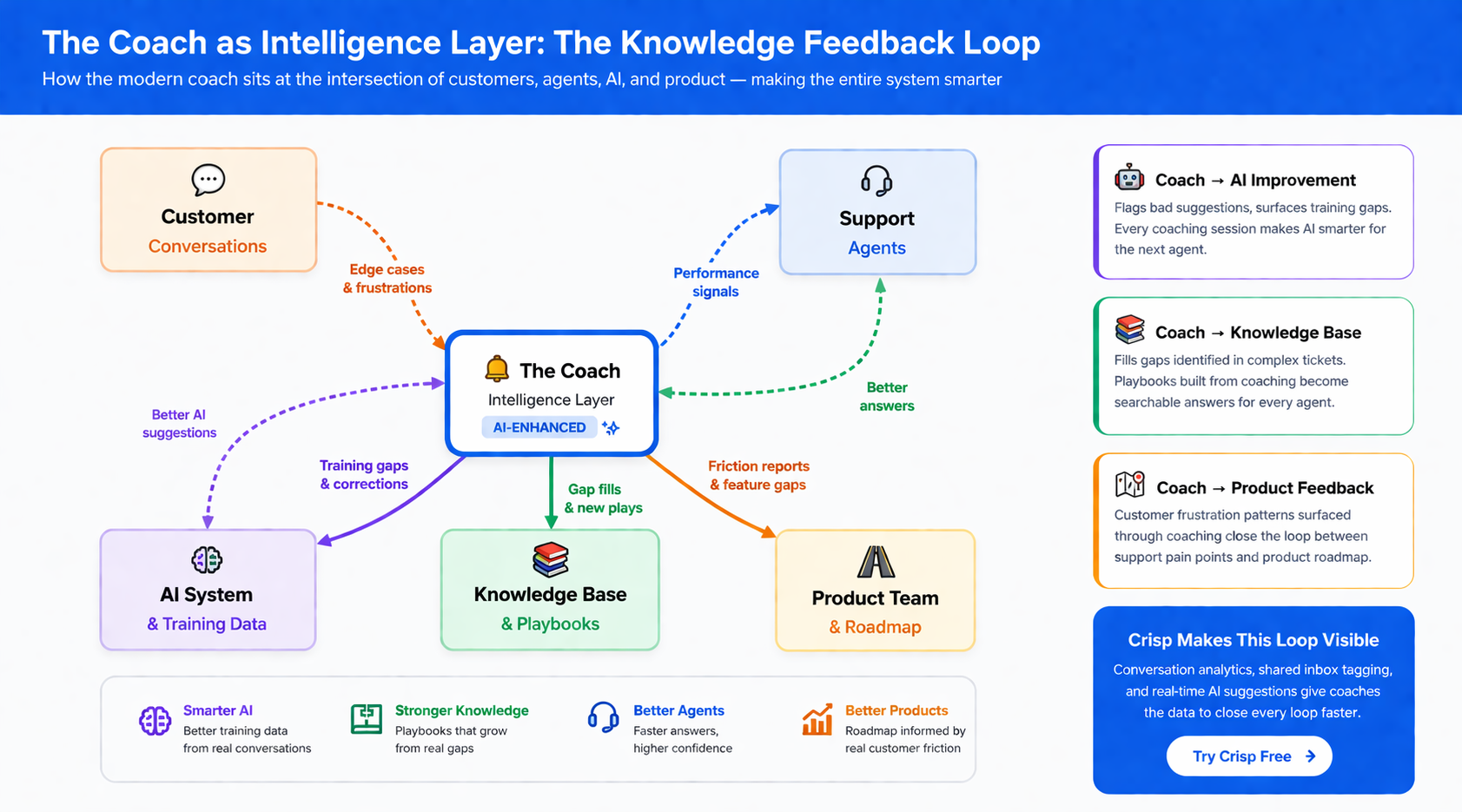

- Knowledge synthesis and feedback loops complete the picture. Coaches now sit at the intersection of customer insights and product knowledge. They see the edge cases, the frustrated customers, the gaps in the knowledge base. A modern customer service coach synthesizes this information and feeds it back into the knowledge base, the AI training data, and product feedback channels. They are, in a meaningful sense, the intelligence layer that makes AI smarter over time.

A roadmap for building a modern customer service coaching practice

Phase 1 — Audit the current coaching model (Weeks 1–2)

Map where coaches currently spend their time. How much is QA review of simple tickets? How much is handling escalations that should be AI-handled? How much is genuine coaching? In most teams, 60 to 70 percent of coaching time is spent on work that AI can now automate or handle directly. Quantifying this is the starting point for everything that follows.
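The audit can start as a simple tally. The sketch below assumes a hand-kept time log; the category names and hours are hypothetical placeholders, not data from any team or tool.

```python
from collections import defaultdict

# Hypothetical two-week time log for one coach: (activity category, hours).
# Category names are illustrative assumptions, not a standard taxonomy.
time_log = [
    ("qa_simple_tickets", 22.0),
    ("escalations_ai_could_handle", 10.0),
    ("genuine_coaching", 12.0),
    ("admin", 6.0),
]

def time_shares(entries):
    """Return each category's share of total logged hours, as a fraction of 1."""
    totals = defaultdict(float)
    for category, hours in entries:
        totals[category] += hours
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {category: hours / grand_total for category, hours in totals.items()}

shares = time_shares(time_log)

# Which categories count as "automatable" is itself an assumption to agree on up front.
automatable = (
    shares.get("qa_simple_tickets", 0.0)
    + shares.get("escalations_ai_could_handle", 0.0)
)

for category, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{category}: {share:.0%}")
print(f"automatable share: {automatable:.0%}")
```

Running the same tally per coach makes it easier to see whether the split is a team-wide pattern or specific to a few people.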

Phase 2 — Redesign the coaching focus (Weeks 3–4)

Shift the coaching model from volume-based monitoring to complexity-based development. Define which ticket types require coaching attention, build a complexity tier for the human queue, and create a coaching rubric specific to the interaction types your team now handles. This is not a cosmetic change—it means rewriting the QA scorecard entirely.
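One way to make a complexity tier concrete is a small scoring rule. This is a minimal sketch: the `Ticket` fields, the weights, and the cutoffs are all assumptions chosen for illustration and would need tuning against a team's real queue.

```python
from dataclasses import dataclass

@dataclass
class Ticket:
    # All fields are hypothetical signals a team might record per conversation.
    prior_touchpoints: int     # contacts before the ticket reached a human agent
    negative_sentiment: bool   # customer flagged as frustrated or upset
    bot_handoff_failed: bool   # chatbot could not resolve and handed off
    policy_exception: bool     # resolution needs a judgment call outside the script

def complexity_tier(ticket: Ticket) -> int:
    """Assign tier 1 (lowest) to 3 (highest) from a simple additive score.

    Weights and cutoffs are placeholder assumptions, not benchmarks.
    """
    score = 0
    score += 1 if ticket.prior_touchpoints >= 2 else 0
    score += 1 if ticket.negative_sentiment else 0
    score += 1 if ticket.bot_handoff_failed else 0
    score += 2 if ticket.policy_exception else 0
    if score >= 3:
        return 3
    if score >= 1:
        return 2
    return 1

example = Ticket(prior_touchpoints=3, negative_sentiment=True,
                 bot_handoff_failed=False, policy_exception=False)
print(complexity_tier(example))
```

Coaching attention can then be weighted toward the higher tiers, with the rubric for each tier written separately.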

Phase 3 — Build an AI calibration training program (Month 2)

Create a structured program for teaching agents to work effectively with AI suggestions. This doesn't need to be elaborate: a library of annotated example conversations showing effective and ineffective AI suggestion usage, combined with weekly debrief sessions where agents review their own AI interaction patterns, is enough to create a meaningful productivity lift. According to IntouchCX, organizations with structured AI adoption programs generate $3.50 in value for every $1 invested—with calibration training being one of the highest-return components.
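For the weekly debriefs, an agent's AI interaction pattern can be summarized as accept, edit, and override rates. The sketch below assumes an exported event log of (agent, action) pairs; the agent names, the log format, and both cutoffs are hypothetical.

```python
from collections import Counter

# Hypothetical event log: one (agent, action) pair per AI suggestion shown.
# Actions are assumed to be "accept", "edit", or "override".
events = [
    ("ana", "accept"), ("ana", "accept"), ("ana", "accept"), ("ana", "accept"),
    ("ben", "accept"), ("ben", "edit"), ("ben", "override"), ("ben", "edit"),
    ("cam", "override"), ("cam", "override"), ("cam", "override"), ("cam", "edit"),
]

ACTIONS = ("accept", "edit", "override")

def calibration_rates(log):
    """Return {agent: {action: share}} across that agent's suggestions."""
    counts = {}
    for agent, action in log:
        counts.setdefault(agent, Counter())[action] += 1
    rates = {}
    for agent, counter in counts.items():
        total = sum(counter.values())
        rates[agent] = {action: counter[action] / total for action in ACTIONS}
    return rates

def flag_pattern(rate, passive_cutoff=0.9, reject_cutoff=0.7):
    """Label a usage pattern; both cutoffs are illustrative assumptions."""
    if rate["accept"] >= passive_cutoff:
        return "passive acceptance"
    if rate["override"] >= reject_cutoff:
        return "reflexive rejection"
    return "mixed"

rates = calibration_rates(events)
flags = {agent: flag_pattern(rate) for agent, rate in rates.items()}
print(flags)
```

A flag is a prompt for a conversation, not a verdict: a high accept rate may simply mean the suggestions were good, which is why the annotated examples matter alongside the numbers.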

Phase 4 — Establish feedback loops (Month 3)

Set up a systematic process for coaches to flag knowledge base gaps, product issues, and AI training opportunities surfaced through coaching sessions. This turns coaching from a cost center into a continuous improvement engine for the entire support stack. Weekly coach-to-product feedback sessions, even brief ones, close the loop between customer frustration and platform improvement faster than any automated process.

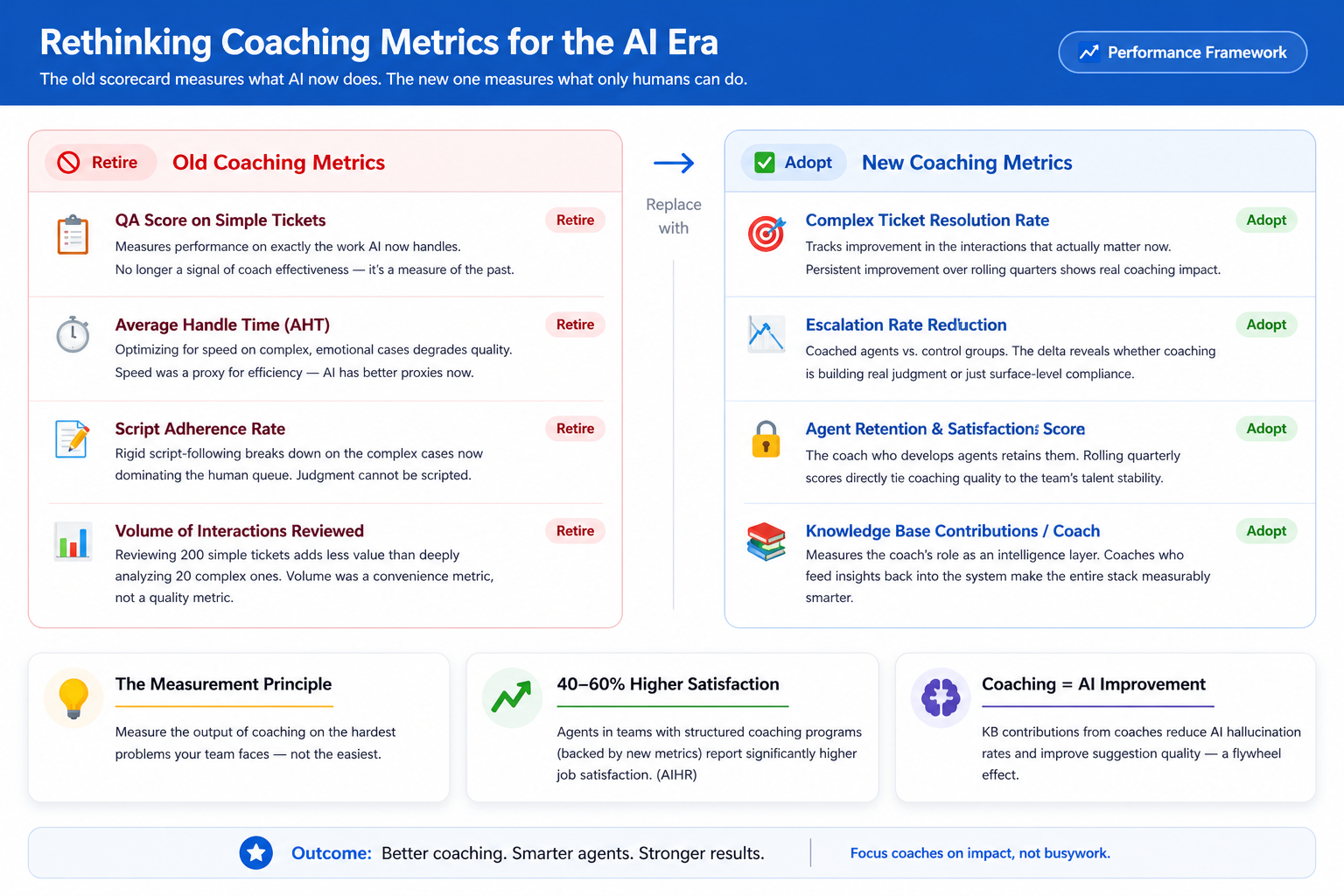

Phase 5 — Measure coaching effectiveness differently

Stop measuring coaching output in QA scores on simple tickets. The new metrics that matter are: improvement in complex ticket resolution rates per agent, reduction in escalation rates for coached agents versus control groups, agent retention and satisfaction scores over rolling quarters, and the quality and frequency of knowledge base contributions per coach. These metrics reflect what excellent coaching in an AI-driven team actually produces.
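The coached-versus-control comparison comes down to simple arithmetic. A minimal sketch, with hypothetical quarterly counts used purely for illustration:

```python
def escalation_rate(escalated: int, handled: int) -> float:
    """Share of handled tickets that were escalated."""
    if handled <= 0:
        raise ValueError("handled must be positive")
    return escalated / handled

def relative_reduction(control_rate: float, coached_rate: float) -> float:
    """Relative reduction versus control; positive means fewer escalations."""
    if control_rate == 0:
        raise ValueError("control rate must be non-zero")
    return (control_rate - coached_rate) / control_rate

# Hypothetical counts for one quarter, not real data.
control = escalation_rate(escalated=60, handled=400)
coached = escalation_rate(escalated=36, handled=400)
reduction = relative_reduction(control, coached)
print(f"control: {control:.1%}, coached: {coached:.1%}, reduction: {reduction:.0%}")
```

With small groups these rates are noisy, so it helps to compare over rolling quarters rather than reading too much into a single period.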

How Crisp supports the modern coaching model

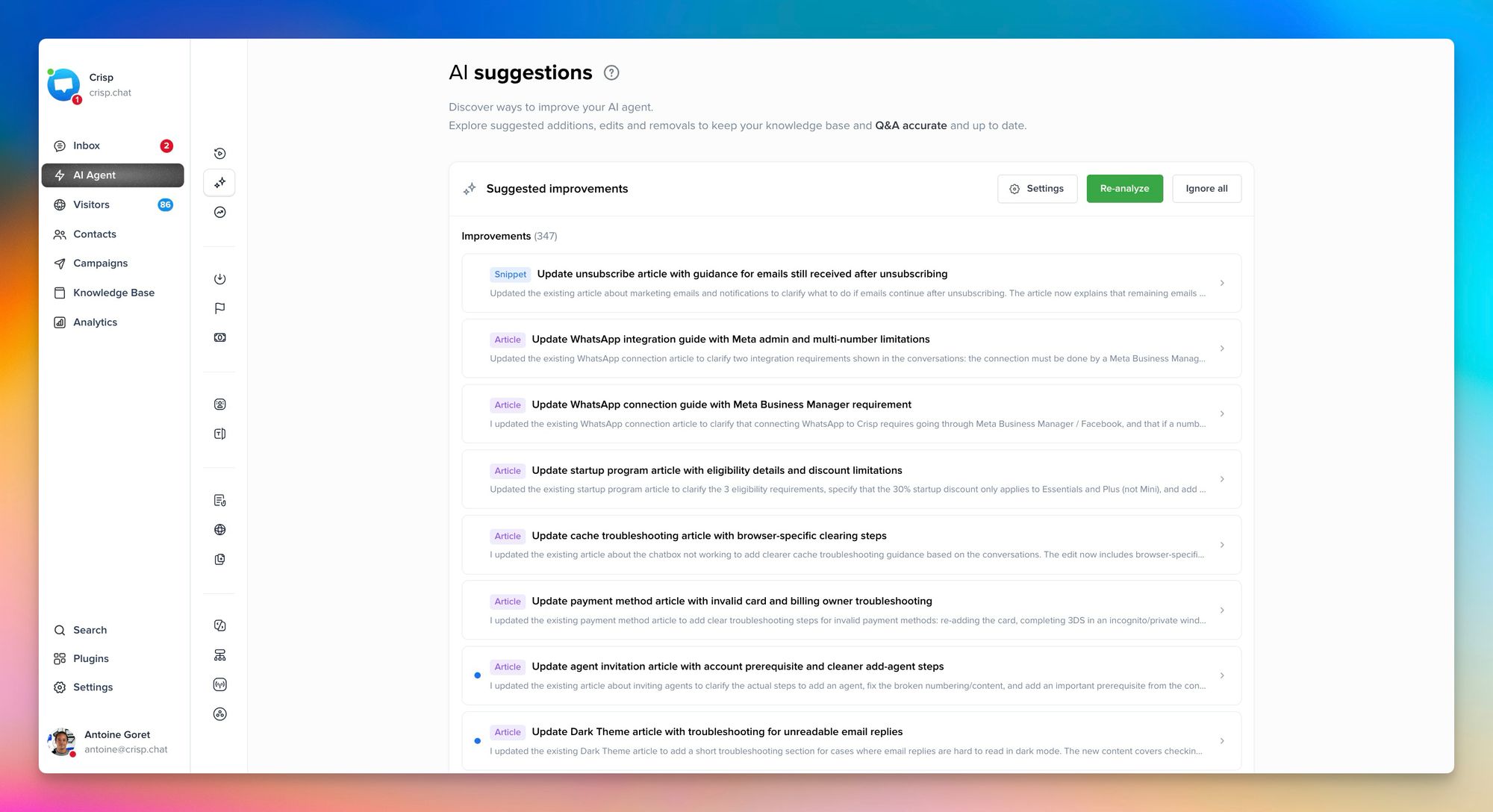

Crisp gives customer service coaches the visibility they need to work effectively in an AI-driven environment.

The conversation analytics dashboard shows exactly where individual agents are struggling: high escalation rates on specific ticket types, slow response times on complex interactions, patterns of passively accepting AI suggestions without editing. This turns coaching from intuition-based to data-driven—and makes coaching sessions far more specific and actionable.

The shared inbox and conversation labelling let coaches build curated case libraries, organizing resolved complex tickets by type and outcome directly inside the tool agents use every day. When a new agent handles their first angry-customer escalation, the example of how a senior agent handled the same situation two months ago is one search away.

And because Crisp's AI layer surfaces knowledge base answers during conversations, coaches can identify knowledge gaps in real time: when an agent searches for the same article repeatedly, or when the same AI suggestion appears frequently, it signals exactly where coaching attention is needed next.
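The repeated-search signal can be sketched in a few lines. This assumes search events are available as (agent, article) pairs from some export; the log format, article identifiers, and threshold are hypothetical and do not describe a Crisp API.

```python
from collections import Counter

# Hypothetical search log: (agent, knowledge base article id) per search.
searches = [
    ("ana", "refund-policy"), ("ana", "refund-policy"), ("ana", "refund-policy"),
    ("ben", "refund-policy"), ("ben", "sso-setup"),
    ("cam", "sso-setup"),
]

def repeated_searches(log, min_repeats=3):
    """Return (agent, article) pairs searched at least min_repeats times.

    min_repeats is an assumed threshold; tune it to the team's volume.
    """
    counts = Counter(log)
    return sorted(pair for pair, n in counts.items() if n >= min_repeats)

print(repeated_searches(searches))
```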

The result is a coaching practice that scales with the team—and that makes the AI tools better, not redundant.

The coaches who can work alongside AI win

The customer service coach is not a relic of a pre-AI world. They are one of the most important roles in an AI-driven support team — precisely because AI cannot substitute for the judgment, development, and knowledge synthesis that effective coaching provides.

A jazz musician does not lose value when someone invents auto-tune. They become more valuable because what they do is exactly what auto-tune cannot replicate. The improvisation. The read of the room. The response to what is happening in real time. AI handles the sheet music. Coaches build the musicians who can play without it.

What changes is the focus. Less time reviewing basic interactions that AI now handles. More time building the skills that determine whether complex interactions become loyalty wins or churn. More time calibrating how agents work alongside AI. More time synthesizing the feedback that makes the entire system smarter.

The coaches who figure this out will define what excellent customer service agent training looks like on the other side of the AI transition.

Frequently Asked Questions

1. Is the customer service coach role at risk of being replaced by AI?

No, and the data points in the opposite direction. AI is eliminating the routine work that previously crowded out coaching, not replacing coaching itself. What it does replace is the QA-on-simple-tickets function that consumed 60 to 70 percent of most coaches' time.

2. What does "AI output calibration" actually mean in practice, and why does it matter?

It means training agents to make active decisions about AI suggestions rather than passive ones. Every time an agent accepts, edits, or overrides an AI-suggested response, they are making a judgment call. When that judgment is good, the team outperforms. When it is reflexive — either blindly accepting or reflexively rejecting — the team underperforms relative to what the AI investment should produce.

3. How should a support team restructure its coaching metrics when adopting AI?

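Retire any metric that measures performance on work AI now handles: QA scores on simple tickets, average handle time on routine cases, and script adherence rates. Replace them with metrics that reflect what coaching in an AI-first environment actually produces: complex ticket resolution rates per agent, escalation rates for coached agents versus control groups, agent retention and satisfaction over rolling quarters, and knowledge base contributions per coach.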

4. How long does it take to see measurable results from a restructured coaching program?

Teams that have made the metrics transition and implemented structured AI calibration programs consistently report measurable improvement in complex ticket resolution within six to eight weeks — significantly faster than the six-month cycles typical under the old QA-first model.

5. What is the coach's role in making the AI system itself better over time?

This is the most undervalued dimension in modern coaching. Coaches sit at the intersection of customer frustration, agent behavior, and knowledge base gaps. Every coaching session surfaces data that, when fed back into the system, improves AI suggestion quality, fills knowledge base holes, and sharpens product feedback.

Sources

Quarterly Journal of Economics, Generative AI at Work — AI productivity gains in customer service teams, https://academic.oup.com/qje/article/140/2/889/7990658

AIHR, How 11 Factors Influence Customer Service Performance — agent satisfaction, coaching, and burnout research, https://www.aihr.com/blog/factors-influencing-customer-service-performance/

IntouchCX, AI in Action: Enhancing Customer Service Through Smarter Training — AI-driven training ROI and agent performance, https://www.intouchcx.com/thought-leadership/ai-in-action-enhancing-customer-service-through-smarter-training/

McKinsey & Company, The Economic Potential of Generative AI — 60 to 70 percent work activity automation potential, https://www.mckinsey.com/capabilities/tech-and-ai/our-insights/the-economic-potential-of-generative-ai-the-next-productivity-frontier

McKinsey & Company, The Next Frontier of Customer Engagement: AI-Enabled Customer Service — AI contact automation and human queue complexity shift, https://www.mckinsey.com/capabilities/operations/our-insights/the-next-frontier-of-customer-engagement-ai-enabled-customer-service